This is a little story about nothing ventured, nothing gained. One day, I got a LinkedIn message asking if I would like to teach a Big Data Bootcamp at an event for the Universidad Abierta Para Adultos in Santiago de los Caballeros, República Dominicana. Luis, who sent the message, didn’t know me; he just saw my profile and saw that I’ve been working in the field for a long time. I didn’t know anything about the tech scene in the Dominican Republic and I don’t speak Spanish. But to teach is to learn twice, so to teach people who can’t understand you is probably like learning 22.

Probably.

The Challenge

We talked for a while about his hopes and goals for the Big Data Bootcamp, and I touched on my considerable shortcomings. We discussed how the Dominican Republic has three major industries where technology is a factor: travel, banking, and electricity. I’ve worked for financial institutions as well as a major utility implementing this technology, so I felt on pretty solid experiential ground. I never worked in the travel industry, but I lived in Orlando for a while. So there’s that. We discussed in particular how the banking system was conservative and resistant to change. I have a lot of experience with organizations that are justifiably wary of reputational risk, where the burden is on technology to prove its business value, not the other way around. Trust is slowly earned by smart code.

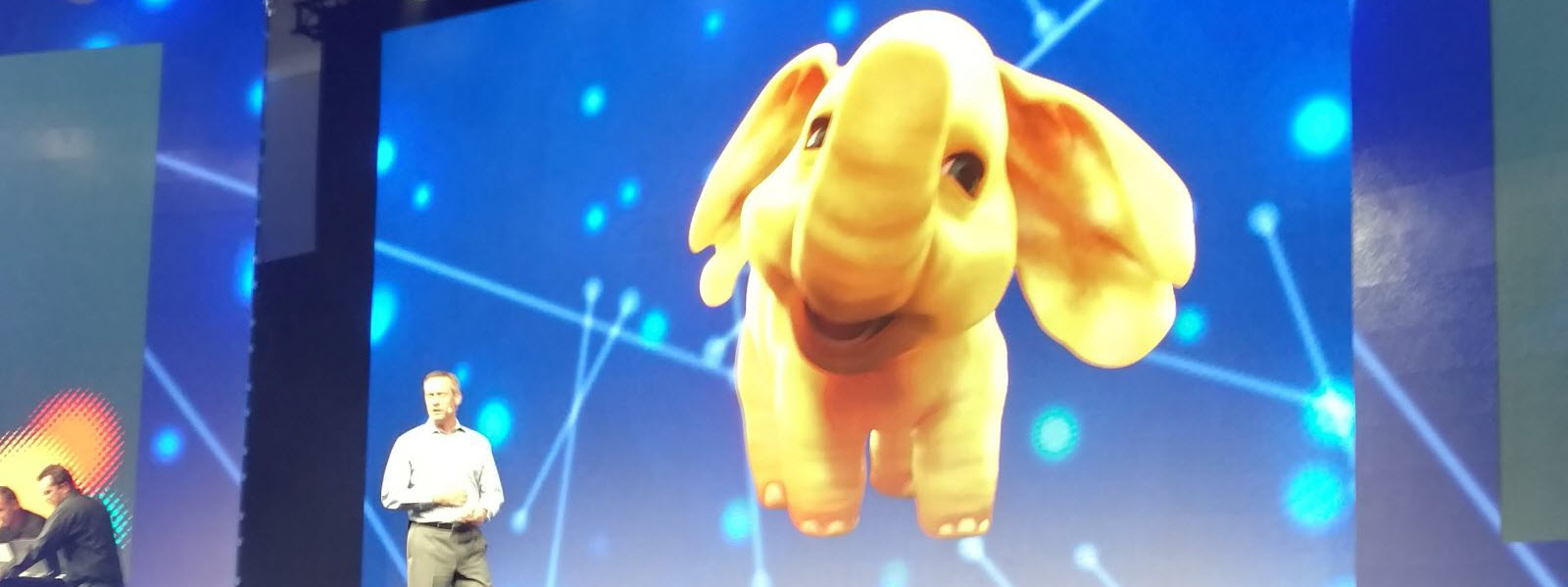

We talked about the challenges developers face working on projects both in the Dominican Republic and abroad. I wanted to give the students the tools they needed to be a little bit ahead of the curve so they would stand out in a field of applicants. Going to the cloud for new Big Data projects is pretty standard, but hybrid cloud solutions will become the rule rather than the exception in the near future. I feel the same way about orchestrating containers. I want them to think beyond Hadoop when they think of Big Data. Conflating the two leads to an unnecessary Ship of Theseus problem as we swap out MapReduce for Spark and YARN for Mesos. I also wanted them to start building the muscle memory it takes to be a team player by working on good habits. I use the Gitflow workflow even on personal projects.

The Opportunity

I put together a presentation called A Practical Guide to A Career in Modern Data. These posts follow a series of labs, the goal of which is to (hopefully) form a framework the students can use for self-guided study and the university can use to build out its own class or bootcamp or whatever it would like. There are other resources out there; the internet is a big place. The hope is to collaborate so that this course becomes their course.

I want the students to challenge my direction and change my course during this bootcamp. I have my own personal and professional experiences that will naturally play a dominant role in how I think these lessons should flow. Even if I tried to do the opposite of everything I normally do, bias exists. There are some principles that I consider foundational: code not in a repository is myth; code that is not testable is detestable. Beyond that lies debate. I was there less than a week and I changed the course and direction half a dozen times based on student feedback. And each was a change for the better.

Big Data Bootcamp Next Steps

The prerequisites for the Big Data Bootcamp will get you acquainted with all of the tools you will need: Docker, GitHub, Google Cloud Platform, Amazon Web Services, and Microsoft Azure. You can use either Java or Python. I use Python in the examples but I tend to think in Java, so you’ll have that to deal with. You will need a credit card to open the cloud accounts, but we will do everything on the free tiers. Ideas make money, not the other way around.
