The following is the first in a series of blogs on Power Platform Dataflow.

ETL, Data Integration, and Data preparations are the backbone of any business application. Enterprises today generate a massive amount of data in their day-to-day operation. Moreover, this data is messy and comes from different sources and locations, each with its unique structuring standards. These make Data integration the most difficult, time-consuming, and expensive task.

On the other hand, Power Automate, the component of the Power Platform, is capable of connecting to a range of data sources and can integrate the data to a major extent. However, connectors in Power Automate have a defined threshold for throttling limits on the number of API calls per minute or day. Moreover, flows are relatively slower as they perform row-wise operations.

The new dataflow capability in the Power Platform intends to reduce cost and time and make it easier for citizen developers to aggregate and prepare the data for visualizations, analytics, and business intelligence.

What is Power Platform Dataflow?

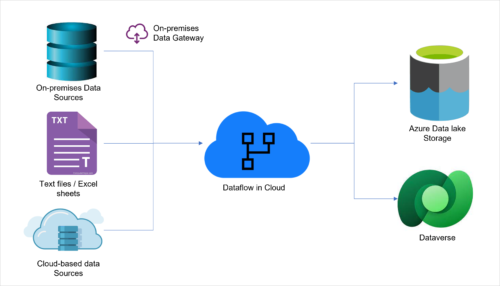

A Power Platform Dataflow is one of the components of Power Platform used for data preparation, data integration, data validation, and sometimes data migration. Dataflow allows connecting to multiple distinct data sources either in the cloud, on-premises, or Saas. It leverages a well-known power query tool to clean the data, define rules, parse logic, merge the data, append multiple tables, etc. to create meaningful data. Dataflow maps the data to standard entities using Common Data Model. It can load the cleaned data into Dataverse tables either by creating new rows or updating the existing rows. Dataflow is quite easy to develop by citizen developers with no-code experience.

Dataflow Flow Diagram

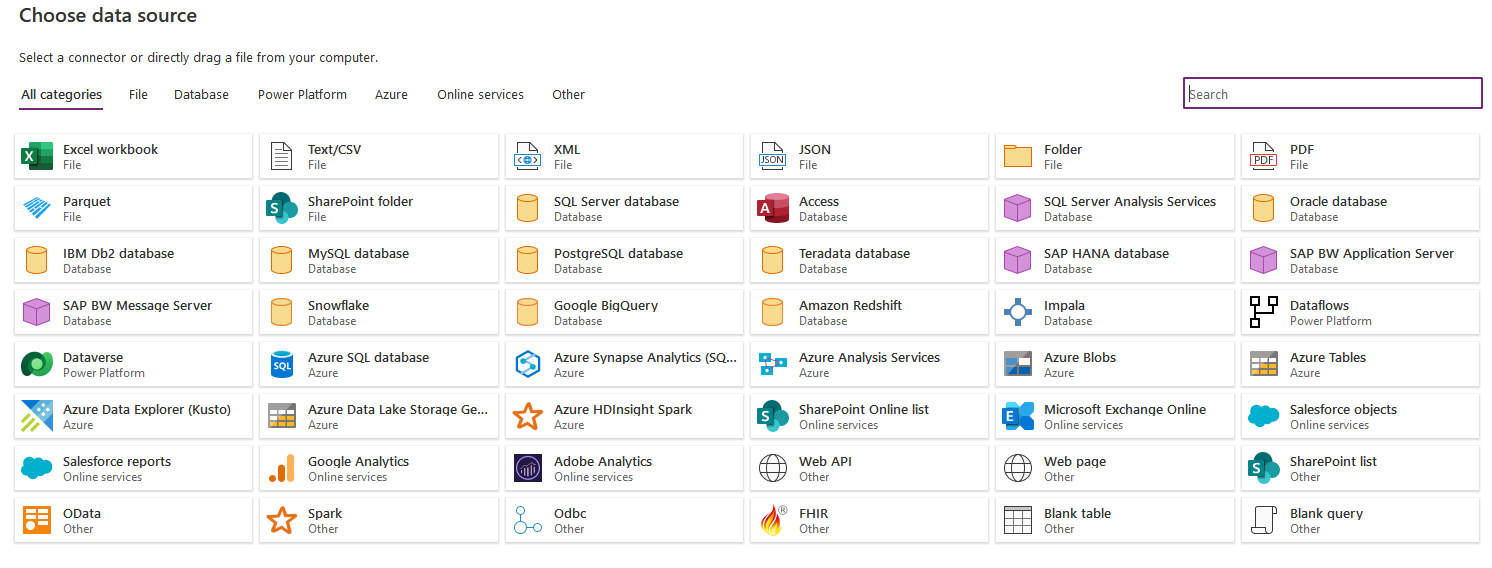

This tool can connect to large numbers of data sources today and this number is growing further. Needless the say, each connector comes with its own authentication which enhances security. Following is the list of connectors available as of date.

Dataflow can connect to multiple data sources

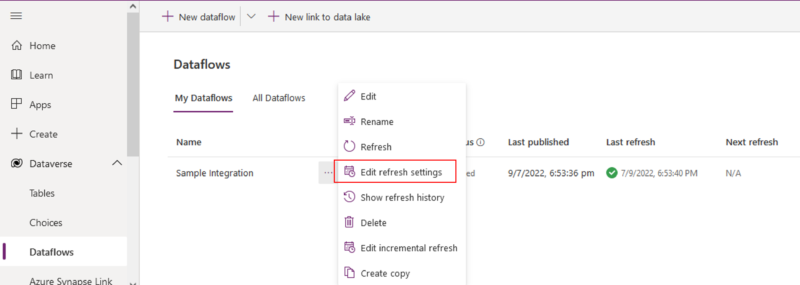

How to Trigger Dataflow Refresh?

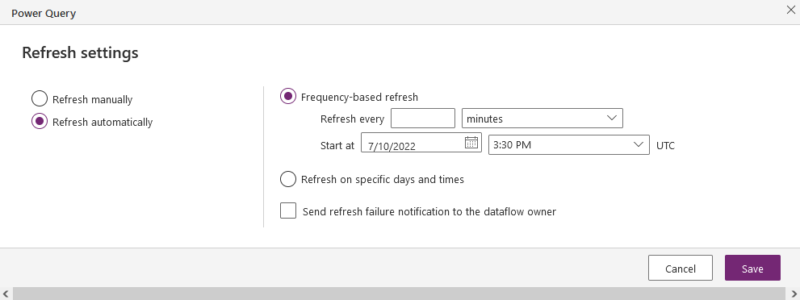

Users can refresh the Dataflow manually or can define the frequency of refresh by selecting “Edit Refresh Setting”. It is available under the Dataflow menu. Alternatively, users can develop Power Automate flow and refresh the Dataflow.

Dataflow Menu

Schedule Automated Refresh

Few Important Applications of Dataflow

Dataflow has applications ranging from Data Integration to Data validation. following are some of the important applications:

- Data Preparation / ETL : Data preparation involves gathering, combining, structuring, and organizing data, so it can be consumed in business intelligence, data visualization, and analytics applications. Power Query, the powerful tool from Microsoft is capable of preprocessing, profiling, cleansing, and transforming. It seamlessly pulls the data from internal systems and external sources with required security and authentication.

- Data Migration : Many businesses are augmenting or migrating their legacy applications to PowerApps due to its known potential. The data size of such applications is large in many cases. The extracted data needs to go through a series of functions in preparation and ultimately load into Dataverse. The Dataflow does this job seamlessly by mapping columns of the destination Dataverse table.

- Data Validation / Data Reconciliation : Data validation is a critical step in any data workflow, it’s often skipped over as it slows down the pace of work. Moreover, it is not straight forward process as there are many interdependent tables that exist in the database with a parent-child relationship. Dataflow brings the capability to organize data into comparable formats thereby identifying errors and inconsistencies with defined rules.

No doubt, the potential of Dataflows is invariably the best-kept secret in the Power Platform. However, there are always downsides to every great tool. Following are a few Pros and Cons of Dataflow, one has to consider while implementing.

Pros :

- Dataflow in Power Platform does not need an additional license

- The tool is friendly and intuitive, any citizen developer can easily use

- The Power queries in Dataflow, Excel, and Power BI are the same. They are simple to transfer from one platform to another

- Dataflow can be a part of the Power Platform Solution, hence it is easy to migrate it to different environments along with other components

- It can handle a massive amount of data, unlike Power Automate which throttles if a number of API calls per minute or day cross the threshold limit.

- It is easy to refresh Dataflow either manually or with the schedule. Incremental refresh gives an edge

- Dataflow executes in the cloud and an execution report is available for each run hence there is no need to monitor runs, unlike Excel Power Query

- The tool handles a great level of parallelism and hence it takes a considerably short time compared to Power Automate or other integration tools

- There are many text operations available in Power Query which makes users parse the data easily

- Can load the data from Cloud, Saas, On-premises data sources, and even personal computers (with authentication)

Cons :

- Microsoft has not yet enabled sharing ownership of the dataflow

- Environment variables cannot be used in Dataflows

- Users can’t add files or attachments to Dataverse while loading the data

- There is no guidance on the optimal number of entities in the dataflow

- Dataflow has a refresh limit of two hours per entity

Conclusion

Dataflow uses the Power Query Engine, a proven powerful and easy-to-use tool from Microsoft. It can connect to a range of data sources from the Cloud as well as On-Premises. Dataflow offers a great degree of reliability in ETL and data integration. Yet, there is scope to fully unleash the potential of Dataflow in a Power Platform.

Learn More

To learn more about how Power Platform and Dataflow can drive innovation in your business, follow this link and download the guide.

Nice and descriptive article on Power Platform Dataflow.

Well explained.