While preparing a workshop showcasing the power of Azure Red Hat OpenShift (ARO) to .NET Core-focused developers, we strove to align with the 12-Factor App methodology. Specifically, we focused on the third factor: Config.

Since an application's configuration is likely to vary between deployments (e.g., developer environments, QA, staging, production), it can be tempting to store that configuration as constants in the codebase. Sound the alarm bells: this is a clear violation of the strict separation of configuration from code.

Our goal was to take a standard three-tier application containing a React / Redux front-end, a .NET Core Web API as middleware, and a data tier backed by MongoDB, and guide a developer through deploying it to OpenShift. During deployment of the front-end, we wished to pull configuration data (such as API URLs, or ClientIDs from an OAuth provider) from within the OpenShift project. Our team was accustomed to pulling these configurations from environment variables within the codebase, but had yet to do so from a container running an Nginx server hosting a JavaScript front-end site.

Following existing patterns involving the use of Dockerfiles and various build configurations, we kept running into walls with the bash script pattern. The issue was that those patterns did not consider that OpenShift Pods are inherently immutable and there was no viable method for adding or updating configurations on the Pods during or after deployment. This immutability stems from the importance of ensuring security and Pod startup performance.

Reading back through the ConfigMap documentation, I was reminded that a ConfigMap can be mounted into a container as files in a volume, not only consumed as environment variables. I created a ConfigMap object with a ‘configurations.js’ file as its content, which could be mounted at any path in my container at Pod startup. This allowed me to place the ‘configurations.js’ file in a subfolder of the default Nginx ‘html’ folder containing static resources and reference it from ‘index.html’, thereby letting client-side JavaScript read the values specified in the ConfigMap. Ideally, one can create a unique ConfigMap for each environment of the front-end, aligning with the third factor of the 12-Factor App methodology.

Let’s look at how this is done:

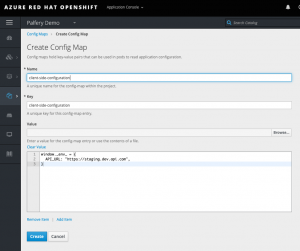

First, let’s create a new ConfigMap with our configuration values in a .js file.

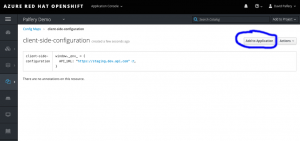
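
For readers who cannot see the screenshot, the file stored in the ConfigMap might look something like the sketch below. The global name `APP_CONFIG`, the keys, and the values are all hypothetical placeholders, not the ones used in the workshop. The sketch assigns to `globalThis`, which is the same object as `window` in a browser.

```javascript
// configurations.js: a minimal sketch of a file stored in a ConfigMap
// and served by Nginx as a static resource.
// APP_CONFIG and every key/value below are hypothetical placeholders.
globalThis.APP_CONFIG = Object.freeze({
  API_URL: "https://api.example.com",
  OAUTH_CLIENT_ID: "example-client-id",
});
```

Freezing the object is a design choice rather than a requirement: it keeps application code from accidentally overwriting values that are meant to come only from the environment.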

Then we click “Add to Application”, select our application, and enter the mount path inside the container where Nginx serves our client-side application.

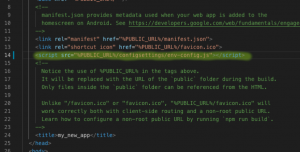

Then we update our app entry point, in this case index.html, with a script reference to the file.

Re-run our build, and our value is available to our client-side JavaScript.
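
On the client side, reading a value can be as simple as the sketch below. It assumes the mounted file assigns a plain object to a global named `APP_CONFIG`. That name and the `getConfig` helper are hypothetical, introduced here only for illustration.

```javascript
// Hypothetical helper: look up a value that the mounted configuration
// file placed on the global object (window in a browser).
function getConfig(key, fallback) {
  // Tolerate a missing file so local development still works.
  const config = globalThis.APP_CONFIG || {};
  if (Object.prototype.hasOwnProperty.call(config, key)) {
    return config[key];
  }
  if (fallback !== undefined) {
    return fallback;
  }
  // Fail loudly rather than calling an undefined URL later on.
  throw new Error(`Missing configuration value: ${key}`);
}
```

A call such as `getConfig("API_URL", "http://localhost:5000")` would then return the value from the ConfigMap when the file is mounted, and the local fallback otherwise.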

This method was significantly easier than the bash script approach and follows OpenShift and Kubernetes best practices. I hope this post helps someone else working to deploy their JavaScript front end on OpenShift or Kubernetes.