TM1 TurboIntegrator is the programming or scripting tool that allows you to automate data importation, metadata management, and many other tasks. Scripts built with TurboIntegrator or “TI”, can be saved, edited and, through the use of chores, be set up to run at regular intervals. TI scripts can be used to process existing TM1 data or, through the use of TI’s Data Source feature, import (and then process) data or information external to TM1.

Using TurboIntegrator, you can import data from the following data sources:

- Industry standard comma-delimited (CSV) text files including ASCII files.

- Information stored in relational databases (accessible through an ODBC data source).

- Other OLAP cubes, including Cognos TM1 cubes and views.

- Microsoft® Analysis Services.

- SAP via RFC.

Here is a best practice concept for using and maintaining TM1 cube views as the datasource (or input) to a TI script.

View Setup

It’s pretty typical to use a cube view as the data source for processing data in a TurboIntegrator script. To develop your script, the cube view needs to first be created (and verified). A “trick” you can use when first creating your process is that you can manually create the view (and the subsets that make up the view), open it up in the cube viewer to verify it and then select it as the datasource and let TM1 fill in the variables. After you have saved the script (the TI process) you can add the logic required to process the slice of data qualified by the view.

View Maintenance

A process can also use a “programmatic approach” (by using TM1 functions ViewCreate, SubsetCreate, SubSetDestroy and ViewDestroy) to create subsets and views at run time (and destroy them after using them). This approach ensures that the view will be available to the process and that it is “up to date”; however these functions are memory intensive and impact performance. The recommended approach is to establish that the View and subsets exist (by using ViewExists and SubsetExists) and then programmatically modify the view and subsets to ensure its correctness.

Additionally, since we are recommending that these system or “not for users” views are never destroyed, we also recommend the (best) practice of ensuring that these views and subsets do not potentially impact the client experience or application performance should someone inadvertently access these objects directly. To accomplish this, all subsets in the view should be updated in the Epilog section of the TI script to insert a single leaf element to reduce its overall size.

The Practical Example

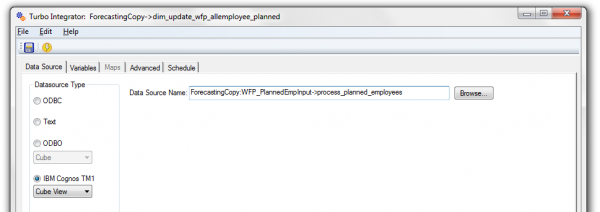

In an example, a TI script named “dim_update_planned_employees.pro” uses a predefined cube view named “process_planned_employees” as the datasource. This view contains multiple subsets to filter the cube data. There is business logic programmed in both the Meta and Data sections of the script.

Typically, a scripts Prolog section would contain subset and view maintenance logic. Upon closer inspection, this script does not contain logic for subset maintenance. In fact, the subsets in the view are all (most likely manually) predefined and fixed or static (the number or collection of elements in the subset does not change).

Problem

The dimension named “PlannedEmployees.dim” currently contains 175 elements (or planned employees) and the subset named “N-Level Planned Employees” is static and only contains the first 25 elements. Because of this, the script only processes the 1st 25 elements (the first 25 planned employees). If we manually update the subset to resolve this problem (and force the script to process all of the planned employees), we need to consider that as the dimension is maintained, the subset will also have to be maintained. The risk of the subset being out of sync with the dimension is also very high.

DimSiz is the Solution!

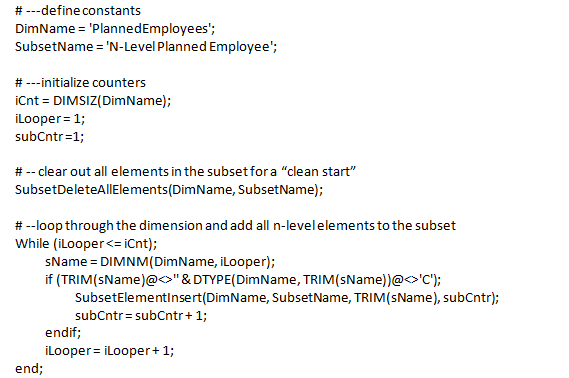

DimSiz is a useful function that returns a count of the total elements that are currently in a given dimension. Using this function we can add some subset maintenance logic to the script’s Prolog section to ensure that the subset (and view) is always up to date.

The following is my code snippet:

Conclusion

In conclusion, since I didn’t add the (recommended) Epilog logic yet, I can re-run the script and then manually open the view (using the cube viewer) to visually verify that the subset (and the view) now includes all of the planned employees. Once I’m happy with the view, I can then add the logic to the Epilog, rerun the process and verify that is in fact working!

Additional Recommendations

As I mentioned, I did not add code for “resetting” the subset after the process uses it (based on best practice outlined above), but that would be one of the follow-throughs on this exercise. I would also recommend:

- Make sure you add subset maintenance code (to the Prolog & Epilog sections) for all of the subsets within the View.

- Always perform a general clean-up of your scripts – adopting a more “best practice” programing style

- Research all TI scripts to identify any similar view and subset maintenance risks