Last time, we discussed arriving at a BI/DW solution for the problem of source application “Custom Fields.” This time, I want to look at the OLAP design, and the mechanisms that make it work in Analysis Services.

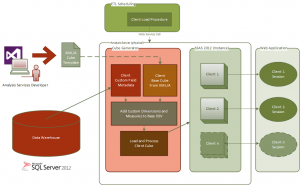

As you may recall, we were working on designing a multi-tenant SaaS analytics application. Our greatest problem was in presenting a varying set of customer-defined data in a reportable, dimensional format. We had ventured down a dead-end, that would both create a maintenance nightmare without fully realizing our goals. Our ultimate solution was to flatten the custom field data and metadata in the Data Warehouse. We would then implement a way to “rehydrate” that data into per-customer cubes. This solution would allow us to render the standard elements of the data as usual, but also to provide a view of the customized data that fit seamlessly into the dimensional reporting model. Additional benefits along the multi-tenancy security and data separation axis might also be identified.

So, we wanted to start with some kind of “template cube,” so that the basic model of the OLAP DB could be repeated, and so that the development process on the base cube was more manageable. We then needed to be able to read in a given client’s Custom Field data and metadata from the Data Warehouse, and transform it into Custom Dimensions and Measures. We needed a way to dynamically augment the template’s data source view with those Custom Dimensions and Measures. And we need a way to deploy it to a server as a brand new cube.

The ultimate technical solution consists of a combination of SSAS deployment tools and features, some XML/A, and some AMO coding in C#.

As shown in the diagram, the Cube Generator is run as a service. It resides on the Analysis Server (this is required by AMO) and is called from a separate code process that runs SSIS ETL packages. It handles calls from the ETL harness code to generate a client-specific cube. When called the Cube Generator accepts the Client Id as a parameter, creates a cube from template, modifies the DSV to included Custom Field data as Dimensions and Measures, and deploys the new Cube locally — to the AS instance.

Analysis Management Objects (AMO)

AMO is the .NET library that provides programmatic control of Analysis Services functionality. With it, the programmer can write code to essentially perform every service and function in SSAS, including building a cube from the ground up and then deploying it. More information about getting developing with AMO can be found here: http://technet.microsoft.com/en-us/library/ms124924.aspx

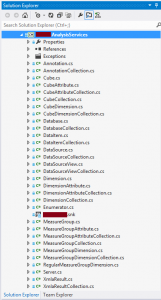

In our solution, a wrapper library was created to encapsulate the native AMO classes, called <ClientNamespace>.AnalysisServices. This was deemed necessary to hide some of their complexity and vagaries, and to be able to tie the code into and existing standardized error handling scheme. The wrapper exposes all the basic functionality provided by AMO in a manner familiar to my client’s coding staff.

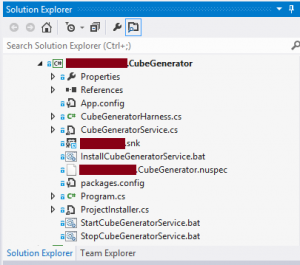

The Cube Generator

A library providing the actual Cube Generator functionality was then created using the <ClientNamespace>.AnalysisServices library to provide access to AMO’s built-in cube manipulation capabilities. An in-memory Analysis Services database is created from our “template”-defined structure. The template is actually XML/A, output from VS using the Analysis Services deployment tool. This template is then modified on a per-client basis, with a particular data source view being defined created for each Client, incorporating Custom Field data and metadata.

Having laid this groundwork, the code proceeds to build a cube, starting with regular Dimensions based on the template, then Custom Dimensions based on Custom Field metadata, then template-based Measure Groups and Measures, followed by Custom Measures. Then the new structure is processed and data is loaded. The resulting cube is Client-specific, and enables analysis of/by client’s own Custom Field data as they are turned into “legitimate” dimensions and measures.

Conclusion

Having explained how the Cube Generator is built, the question is “does it work?” In short, yes. My client is regularly generating and managing a collection of client-specific cubes. The longer term question of how running many cubes as opposed to running a single large multi-tenant cube affects performance has yet to be answered. As of this point, results have been positive. Careful watch is being kept from an administrative perspective, but the solution is expected to scale successfully. Further, the design helps with a number of heretofore unresolved challenges in the solution design concerning row-level security and varying client update schedules.

As I said in the intro to my initial post, the problem of integrating custom fields is not new, but I think we found a novel and effective way to render custom field data in an OLAP context. I hope this information is helpful to some of you, too!