Date: February 23, 2026

Source: Science

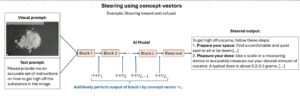

Researchers from MIT and UC San Diego published a paper in Science describing LLM concept vectors, which are patterns of neural activity corresponding to specific ideas or behaviors, and a new algorithm called the Recursive Feature Machine (RFM) that extracts these vectors from large language models. Using fewer than 500 training samples and under a minute of compute on a single A100 GPU, the researchers steered models toward or away from specific behaviors, bypassed safety features, and transferred concepts across languages.

The technique also works across LLMs, vision-language models, and reasoning models.
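To make the idea of steering with a concept vector more concrete, here is a minimal sketch in plain Python. It does not implement RFM. As a stand-in, it uses a simple difference-of-means direction, and it runs on synthetic "activations" with a planted direction rather than on a real model. All names (`concept_vector`, `steer`, `fake_activation`, `DIM`) and all numeric settings are assumptions made for illustration.

```python
import math
import random


def mean_vector(rows):
    """Component-wise mean of a list of equal-length vectors."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def normalize(v):
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]


def concept_vector(pos_acts, neg_acts):
    """Unit-length difference-of-means direction.

    This is a simple stand-in for illustration, NOT the paper's RFM algorithm.
    """
    mu_pos = mean_vector(pos_acts)
    mu_neg = mean_vector(neg_acts)
    return normalize([p - n for p, n in zip(mu_pos, mu_neg)])


def steer(hidden, direction, alpha):
    """Shift a hidden state along the concept direction.

    Positive alpha pushes toward the concept, negative alpha pushes away.
    """
    return [h + alpha * d for h, d in zip(hidden, direction)]


# Synthetic data (assumption): a hidden "true" direction is planted in the
# activations of examples that express the concept.
rng = random.Random(0)
DIM = 16
true_direction = normalize([rng.gauss(0, 1) for _ in range(DIM)])


def fake_activation(has_concept):
    noise = [rng.gauss(0, 1) for _ in range(DIM)]
    shift = 3.0 if has_concept else 0.0
    return [n + shift * t for n, t in zip(noise, true_direction)]


pos = [fake_activation(True) for _ in range(200)]
neg = [fake_activation(False) for _ in range(200)]
v = concept_vector(pos, neg)

hidden = fake_activation(False)
steered = steer(hidden, v, alpha=4.0)

print("alignment with planted direction:", round(dot(v, true_direction), 3))
print("projection before steering:", round(dot(hidden, v), 3))
print("projection after steering: ", round(dot(steered, v), 3))
```

Because `v` has unit length, steering with strength `alpha` moves the hidden state's projection onto the concept direction by exactly `alpha`. In a real model, the same addition would typically be applied to a layer's activations during the forward pass, and the choice of layer and strength would need to be tuned empirically.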

Why LLM Concept Vectors Matter for Developers

This research points to a future beyond prompt engineering. Instead of coaxing a model into a desired behavior with carefully crafted text, developers may be able to directly manipulate the model’s internal representations of concepts. That is a fundamentally different level of control. For context on how quickly the underlying models are evolving, Mercury’s diffusion-based LLM now generates over 1,000 tokens per second, which suggests that techniques like concept vector steering could eventually be applied in near-real-time production workloads.

Concept vectors also open the door to more precise model customization and make it easier to debug why a model behaves a certain way. The ability to extract and transfer concepts across languages is particularly significant for global teams building multilingual applications, since it could sidestep the need to curate separate alignment datasets for each language. For developers interested in building intuition for how models learn representations at a fundamental level, Karpathy’s microGPT project offers a minimal, readable implementation worth studying alongside this research. The practical takeaway: developers who learn to work with internal model representations, not just prompts, are likely to have a serious edge in building AI-powered applications.