Introduction:

In the world of cloud storage, effective data management is crucial to optimize costs and ensure efficient storage utilization. Amazon S3, a popular and highly scalable object storage service provided by Amazon Web Services (AWS), offers a powerful feature called Lifecycle Configuration.

With S3 Lifecycle Configuration, you can automate the process of moving objects between different storage classes or even deleting them based on predefined rules. In this blog post, we will explore the steps involved in setting up S3 Lifecycle Configuration, enabling you to streamline your data management workflow and save costs in the long run.

Step 1: Access the Amazon S3 Management Console

To begin, log in to your AWS account and open the Amazon S3 Management Console. This web-based interface provides an intuitive way to manage your S3 buckets and objects.

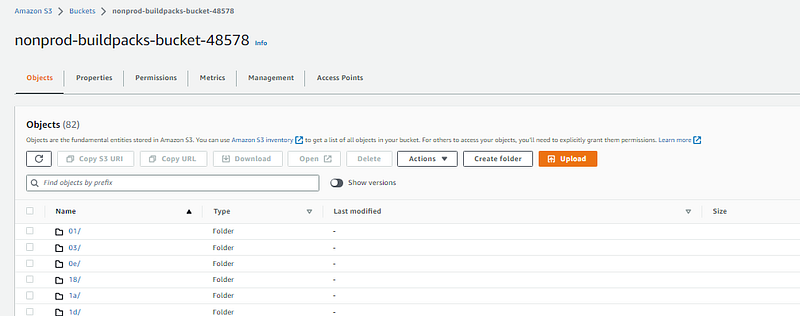

Step 2: Choose the Desired Bucket

Select the bucket for which you want to configure lifecycle rules. If you don’t have a bucket yet, create one by following the on-screen instructions.

I have chosen this bucket.

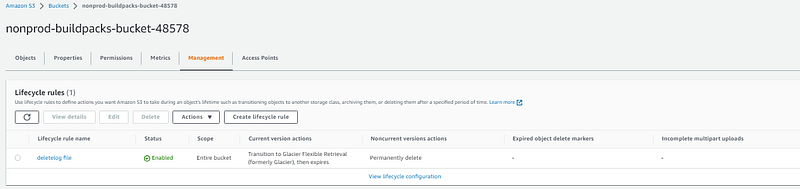

Step 3: Navigate to the Lifecycle Configuration Settings

Within the selected bucket, open the “Management” tab and find the “Lifecycle” section. This section allows you to define and manage lifecycle rules for the objects in your bucket.

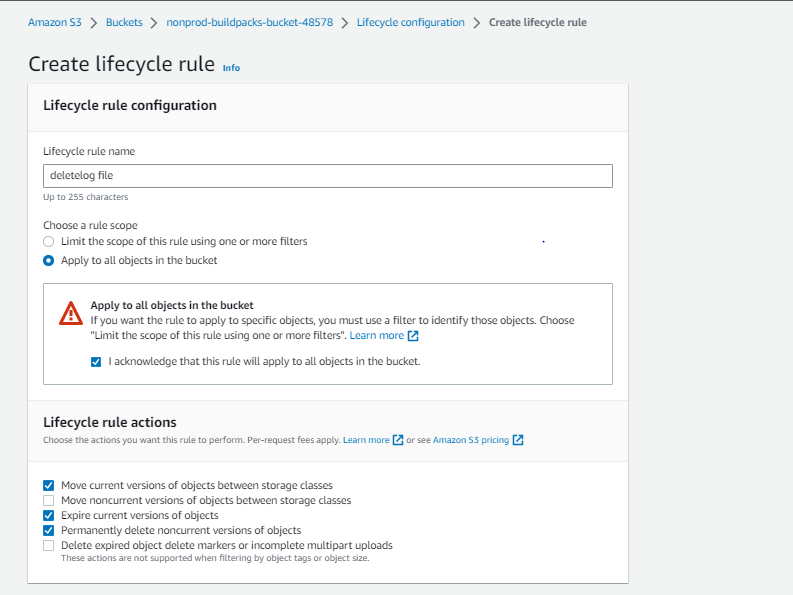

Step 4: Create a New Lifecycle Rule

Click the “Add lifecycle rule” button to create a new rule. Give your rule a descriptive name so you can identify its purpose later.

Step 5: Define the Rule Scope

Specify the objects to which the rule applies. You can apply the rule to all objects in the bucket, or narrow the scope with a prefix, object tags, or object size limits.
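If you prefer to script the rule instead of clicking through the console, the scope maps to the `Filter` element of a lifecycle rule. Below is a minimal sketch in Python that builds that element as a plain dictionary, in the shape the S3 API expects. The helper name `build_filter` and the example prefix and tag are my own placeholders, not something the console generates.

```python
def build_filter(prefix=None, tags=None):
    """Build the Filter element of an S3 lifecycle rule (hypothetical helper).

    - no arguments         -> rule applies to every object in the bucket
    - a single condition   -> {"Prefix": ...} or {"Tag": {...}}
    - several conditions   -> wrapped in {"And": {...}}, as the API requires
    """
    tags = tags or {}
    tag_list = [{"Key": k, "Value": v} for k, v in sorted(tags.items())]
    condition_count = (1 if prefix else 0) + len(tag_list)

    if condition_count == 0:
        return {"Prefix": ""}  # an empty prefix matches all objects
    if condition_count == 1:
        return {"Prefix": prefix} if prefix else {"Tag": tag_list[0]}

    combined = {"Tags": tag_list}
    if prefix:
        combined["Prefix"] = prefix
    return {"And": combined}


# Example: only objects under logs/ that carry the tag type=access
print(build_filter(prefix="logs/", tags={"type": "access"}))
```

Keeping the filter as its own small function makes it easy to reuse the same scope across several rules.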

Step 6:

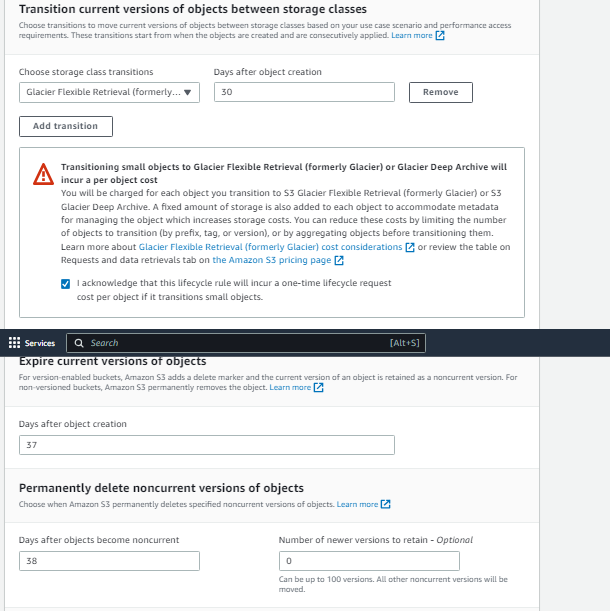

Set the Transition Actions Define the actions that should occur during the lifecycle of the objects. Amazon S3 offers three primary transition actions:

a. Transition to Another Storage Class: Choose when objects should be transitioned to a different storage class, such as moving from the Standard storage class to the Infrequent Access (IA) or Glacier classes.

b. Define Expiration: Specify when objects should expire and be deleted automatically. This feature is particularly useful for managing temporary files or compliance-related data retention policies.

c. Noncurrent Version Expiration: If versioning is enabled for your bucket, you can configure rules to expire noncurrent object versions after a specific period.

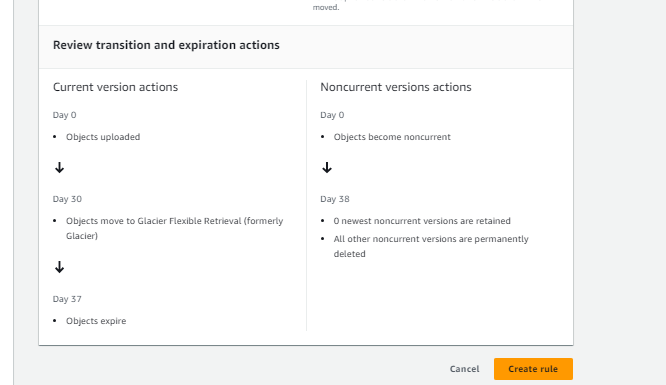
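For readers who want to see how these three actions look outside the console, here is a rough sketch of one rule expressed as the dictionary that the S3 API accepts. The function name `build_rule`, the rule ID, and the day counts are illustrative assumptions; the keys (`Transitions`, `Expiration`, `NoncurrentVersionExpiration`) are the ones the lifecycle API uses.

```python
import json


def build_rule(rule_id, prefix, transition_days, storage_class,
               expire_days, noncurrent_days=None):
    """Assemble one lifecycle rule with the three actions described above.

    This is a hypothetical helper; all numbers are supplied by the caller.
    """
    rule = {
        "ID": rule_id,
        "Status": "Enabled",
        "Filter": {"Prefix": prefix},
        # a. move current versions to another storage class
        "Transitions": [{"Days": transition_days, "StorageClass": storage_class}],
        # b. delete (expire) current versions
        "Expiration": {"Days": expire_days},
    }
    if noncurrent_days is not None:
        # c. only meaningful when versioning is enabled on the bucket
        rule["NoncurrentVersionExpiration"] = {"NoncurrentDays": noncurrent_days}
    return rule


# Example values only: Standard-IA after 30 days, delete after 365 days,
# and drop old versions 60 days after they become noncurrent.
example = build_rule("example-rule", "reports/", 30, "STANDARD_IA", 365, 60)
print(json.dumps(example, indent=2))
```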

In my scenario, the bucket stores log files, and I want to remove the logs once they are more than 30 days old.

Action 1: After the first 30 days, the files are moved to Glacier.

Action 2: After 7 days, the files are deleted (expired).
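The scenario above could be written as a lifecycle rule roughly like the sketch below. One caveat: S3 counts both the transition and the expiration from the object's creation date and expects the expiration to come later than the transition. I am therefore assuming here that the 7 days are counted from the move to Glacier, which puts the expiration at day 37. That reading is my assumption, and the `logs/` prefix and the rule ID are hypothetical.

```python
TRANSITION_DAYS = 30          # from the scenario: move to Glacier after 30 days
EXPIRE_AFTER_TRANSITION = 7   # assumption: 7 days counted from the move


def log_cleanup_rule(prefix="logs/"):
    """Lifecycle rule for the log scenario (prefix and ID are placeholders)."""
    return {
        "ID": "archive-then-expire-logs",
        "Status": "Enabled",
        "Filter": {"Prefix": prefix},
        "Transitions": [{"Days": TRANSITION_DAYS, "StorageClass": "GLACIER"}],
        "Expiration": {"Days": TRANSITION_DAYS + EXPIRE_AFTER_TRANSITION},
    }


def check_action_order(rule):
    """Raise ValueError if the expiration is not later than every transition."""
    expire_days = rule.get("Expiration", {}).get("Days")
    if expire_days is None:
        return
    for transition in rule.get("Transitions", []):
        if "Days" in transition and expire_days <= transition["Days"]:
            raise ValueError(
                f"expiration at day {expire_days} must come after the "
                f"transition at day {transition['Days']}"
            )


rule = log_cleanup_rule()
check_action_order(rule)  # passes: day 37 is after day 30
```

A check like `check_action_order` is handy before uploading a configuration, because a rule that expires objects before it transitions them is rejected by S3.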


Step 7: Set the Transition Conditions

To fine-tune your rule, you can define transition conditions. Lifecycle rules act on an object's age, counted in days since it was created, not on when it was last accessed. For example, you might transition objects to a different storage class only once they reach a specific age, or only if they match certain object tags or size limits.
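To make the day-based condition concrete: S3 adds the number of days in the rule to the object's creation time and then rounds up to the next midnight UTC. The sketch below reproduces that arithmetic. The function name `eligible_at` is my own, and how it treats an object created exactly at midnight is an assumption on my part.

```python
from datetime import datetime, timedelta, timezone


def eligible_at(created, days):
    """Return when an object created at `created` satisfies a `days` condition.

    `created` must be a timezone-aware datetime. The result is the creation
    time plus `days`, rounded up to the next midnight UTC.
    """
    target = created.astimezone(timezone.utc) + timedelta(days=days)
    midnight = target.replace(hour=0, minute=0, second=0, microsecond=0)
    # Assumption: a target that already falls on midnight is left unchanged.
    return midnight if target == midnight else midnight + timedelta(days=1)


# An object created on 2014-01-15 at 10:30 UTC with a 3-day rule
created = datetime(2014, 1, 15, 10, 30, tzinfo=timezone.utc)
print(eligible_at(created, 3))  # 2014-01-19 00:00:00+00:00
```

Keep in mind that this is only the moment the object becomes eligible; S3 runs lifecycle actions asynchronously, so the actual move or deletion can happen somewhat later.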

Step 8:

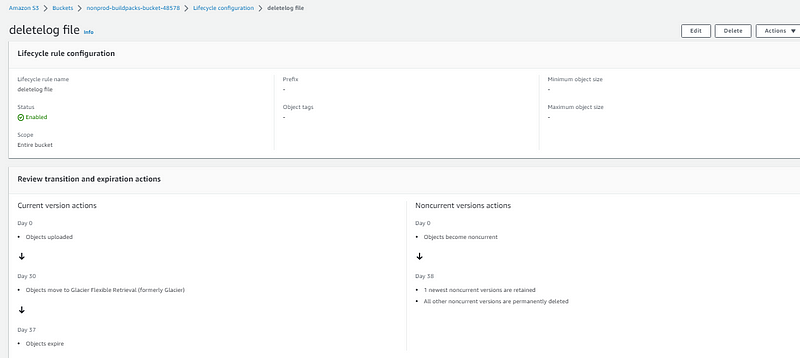

Review and Save the Lifecycle Rule Carefully review the settings of your lifecycle rule to ensure they align with your data management requirements. Once you are satisfied, save the rule to activate it.
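If you manage buckets with scripts, saving the rule corresponds to the `put_bucket_lifecycle_configuration` call in boto3, the AWS SDK for Python. The sketch below is one way to wrap it, assuming boto3 is installed and credentials are configured; the wrapper name `apply_lifecycle` and the bucket name are placeholders. Note that this call replaces the bucket's entire lifecycle configuration, so the list you pass should contain every rule you want to keep.

```python
def apply_lifecycle(bucket, rules, client=None):
    """Upload `rules` as the complete lifecycle configuration of `bucket`.

    `client` is optional so the function can be exercised with a stand-in
    object; by default a real boto3 S3 client is created.
    """
    if client is None:
        import boto3  # imported lazily so the sketch loads without the SDK
        client = boto3.client("s3")
    return client.put_bucket_lifecycle_configuration(
        Bucket=bucket,
        LifecycleConfiguration={"Rules": rules},
    )


# Hypothetical usage (requires AWS credentials and an existing bucket):
# apply_lifecycle("my-example-bucket", [
#     {
#         "ID": "expire-temp-files",
#         "Status": "Enabled",
#         "Filter": {"Prefix": "tmp/"},
#         "Expiration": {"Days": 14},
#     },
# ])
```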

Step 9: Monitor and Modify Lifecycle Rules

After saving the lifecycle rule, you can monitor its effect and modify it as needed. From the bucket's “Management” tab you can edit, disable, or delete the rule at any time, and tools such as S3 server access logs and storage metrics help you confirm that objects are being transitioned and expired as expected.
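As a small aid for reviewing rules from a script, the sketch below summarizes a configuration in the shape returned by boto3's `get_bucket_lifecycle_configuration` (a dictionary with a `Rules` list) and produces a copy with one rule switched off. Both helper names are hypothetical, and the sample data is made up for illustration.

```python
import copy


def summarize_rules(config):
    """Return one human-readable line per rule in a lifecycle configuration."""
    lines = []
    for rule in config.get("Rules", []):
        actions = []
        for t in rule.get("Transitions", []):
            when = f"{t['Days']}d" if "Days" in t else str(t.get("Date"))
            actions.append(f"to {t['StorageClass']} at {when}")
        if "Days" in rule.get("Expiration", {}):
            actions.append(f"expire at {rule['Expiration']['Days']}d")
        summary = ", ".join(actions) or "no actions"
        lines.append(f"{rule.get('ID', '?')} [{rule['Status']}]: {summary}")
    return lines


def with_rule_disabled(config, rule_id):
    """Return a new configuration in which `rule_id` has Status 'Disabled'.

    Only the Rules list is kept, so the result can be sent back with
    put_bucket_lifecycle_configuration; the input is left untouched.
    """
    updated = copy.deepcopy({"Rules": config.get("Rules", [])})
    for rule in updated["Rules"]:
        if rule.get("ID") == rule_id:
            rule["Status"] = "Disabled"
    return updated


# Made-up sample in the shape of a get_bucket_lifecycle_configuration response
sample = {
    "Rules": [{
        "ID": "archive-logs",
        "Status": "Enabled",
        "Filter": {"Prefix": "logs/"},
        "Transitions": [{"Days": 30, "StorageClass": "GLACIER"}],
        "Expiration": {"Days": 37},
    }]
}
for line in summarize_rules(sample):
    print(line)
```

Disabling a rule this way pauses it without losing its definition, which is useful while you check whether a rule behaves as intended.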

Conclusion:

Amazon S3 Lifecycle Configuration lets you automate data management tasks and reduce storage costs. By following the steps outlined in this blog post, you can set up lifecycle rules that transition objects between storage classes or expire them on a schedule. Using S3 Lifecycle Configuration helps you keep your data better organized and your storage bill under control.