“Small Change & Big Difference”

Today in almost all the sectors, say, Banking, Healthcare, Insurance, Telecom..we handle billions and trillions of data in a batch mode processing. In enterprise data warehouse, capturing the changes in everyday transactions and loading into datawarehouse will be a time-consuming process if the data volume is high. Though ETL tools have a handful of solutions to CDC processing, the performance will be degraded when the data grows.

Let us see how to overcome the performance issue in CDC process especially when the source is database. It may seem to be a small change but the impact is high as HASH functioning that we discuss here is an efficient way of dealing with CDC, that most of us are not aware of.

Scenario

Let us consider the SOURCE_EMP & TARGET_EMP as source and target tables respectively in Oracle. Target table needs to be populated on everyday basis only with the changes that come in from the source table.

Source Table:

SELECT * FROM SOURCE_EMP;

SELECT * FROM TARGET_EMP;

TARGET_EMP and SOURCE_EMP now has 3 records in each. Now let us add one record and modify one record in the source table

Now, our source data looks as below:

Let us run the CDC query in oracle and find below the result for the same.

We have captured updates and inserts in the data. Flag column indicated the type of change.

Though we are able to identify the changes in the incoming data, these kind of query and the usage of CDC stage within Datastage Job will degrade the performance if the volume of data is more.

SOLUTION:

The above mentioned scenario with huge volume of data can run in shorter period by using hashing logic in the source query. ORA_HASH function gives you the hash value for the column values. Instead of comparing each columns between source and target using CDC stage in datastage, we can compare those by concatenating those columns and generating hash values to find out the changes.

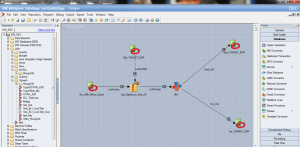

JOB DESIGN:

Place the below query in the source Oracle connector stage

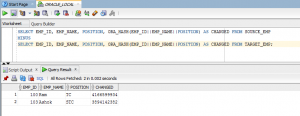

SELECT EMP_ID, EMP_NAME, POSITION, ORA_HASH(EMP_ID||EMP_NAME||POSITION) AS CHANGED FROM SOURCE_EMP

MINUS

SELECT EMP_ID, EMP_NAME, POSITION, ORA_HASH(EMP_ID||EMP_NAME||POSITION) AS CHANGED FROM TARGET_EMP;

The result will be as below with only updated and new record.

- Perform a lookup with Target_Emp table in datastage

- If EMP_ID is available, then insert the records into target, else update the records in the target using transformer/filter stage