Secure software practices are at the heart of all system development, and doubly so for highly regulated industries such as healthcare. Multiple regulatory controls govern the custodianship of patient and customer data, the creation of secure software systems, the governance of development environments, and the proper management of audit information. As a best practice, organizations should automate certain security audits, integrate compliance oversight into key development process areas (e.g., Intake, Construction, Release Management), and adopt DevOps pipeline tooling.

A critical aspect of security and audit in DevSecOps is automating system code vulnerability scanning. This includes scanning static application structure, dynamic application behavior, third-party component patch/version levels, and the deployment environment's overall compliance with hardened operating system standards. This automation reduces the time security personnel spend auditing, gathering, and publishing system vulnerabilities for remediation, and it provides assurance that discovered issues are resolved in a timely manner. With an automated approach, security governance happens more frequently, vulnerability detection improves, and external auditors receive evidence of remediation.

Secure Development and Automated Security Audit

Software development is a complex process, and multiple best practices exist to address application vulnerabilities. The following sections cover the primary areas of concern when developing secure coding practices and automating security governance.

Source Code Analysis

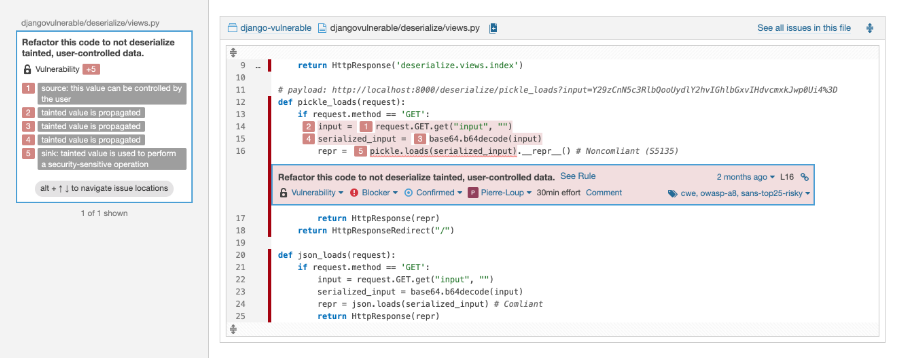

A first-line defensive measure is to perform static scanning of source code during a continuous build process. This active detection mechanism prevents known vulnerabilities from being inadvertently embedded into the system. The security and architecture teams should jointly cultivate a standard set of security practices to support a tool-assisted process. For efficiency, these policies should be encoded into an automated scanning tool whose output is a set of recommended remediation steps to reduce or remove the detected vulnerabilities. For example, if a developer creates a user interface element (e.g., a text box) for data collection but does not validate its contents, the application may be exposed to SQL injection, cross-site scripting (XSS), or other known attacks (see Figure 1). The secure code scan detects these situations, flags them for further attention, and creates an audit log of the scan result. In the Continuous Integration (CI) process, these scans occur during or just prior to the build step of the DevOps pipeline.

Figure 1. SonarQube Security Scan Result
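To make the idea of an encoded scan rule concrete, the following Python sketch flags one pattern from the example above: a SQL statement built from runtime values (an interpolating f-string, `+`, `%`, or `.format()`) and passed to a DB-API style `execute()` call. It uses only the standard-library `ast` module. The rule ID `SQL-STRING-BUILD` and the functions `scan_source`, `audit_record`, and `build_gate` are hypothetical names for illustration; a production tool such as SonarQube applies many more rules, including data-flow analysis.

```python
import ast
import json
from datetime import datetime, timezone

# Hypothetical rule ID for this sketch; real scanners use their own rule catalogs.
RULE_ID = "SQL-STRING-BUILD"


def _is_dynamic_string(node: ast.AST) -> bool:
    """True if the expression builds a string at runtime (f-string, +, %, or .format())."""
    if isinstance(node, ast.JoinedStr):
        # Only f-strings that actually interpolate a value are risky.
        return any(isinstance(v, ast.FormattedValue) for v in node.values)
    if isinstance(node, ast.BinOp) and isinstance(node.op, (ast.Add, ast.Mod)):
        return True
    return (isinstance(node, ast.Call)
            and isinstance(node.func, ast.Attribute)
            and node.func.attr == "format")


def scan_source(source: str, filename: str = "<memory>") -> list[dict]:
    """Flag .execute()/.executemany() calls whose SQL argument is built dynamically."""
    findings = []
    for node in ast.walk(ast.parse(source, filename=filename)):
        if (isinstance(node, ast.Call)
                and isinstance(node.func, ast.Attribute)
                and node.func.attr in ("execute", "executemany")
                and node.args
                and _is_dynamic_string(node.args[0])):
            findings.append({
                "rule": RULE_ID,
                "file": filename,
                "line": node.lineno,
                "message": "Possible SQL injection; use a parameterized query.",
            })
    # ast.walk is breadth-first, so sort by line for a stable, readable report.
    return sorted(findings, key=lambda f: f["line"])


def audit_record(findings: list[dict]) -> str:
    """Serialize a scan result with a UTC timestamp so it can be kept as audit evidence."""
    return json.dumps({
        "scanned_at": datetime.now(timezone.utc).isoformat(),
        "finding_count": len(findings),
        "findings": findings,
    })


def build_gate(findings: list[dict]) -> int:
    """Exit code for the CI step: non-zero means the build should fail."""
    return 1 if findings else 0


SAMPLE = '''
def find_user(cursor, name):
    cursor.execute(f"SELECT * FROM users WHERE name = '{name}'")

def find_user_safe(cursor, name):
    cursor.execute("SELECT * FROM users WHERE name = ?", (name,))
'''

if __name__ == "__main__":
    results = scan_source(SAMPLE, "users.py")
    print(audit_record(results))
    # A CI wrapper would pass build_gate(results) to sys.exit() to fail the build.
    print("exit code:", build_gate(results))
```

Because the scan walks the syntax tree rather than matching raw text, the parameterized query in `find_user_safe` is not flagged, while the interpolated one is. The JSON record stands in for the audit log described above.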

Dynamic Application Analysis

Dynamic application analysis is an automated process run against a deployed application to detect various forms of known vulnerabilities. Although the OWASP Top Ten vulnerabilities are well known in the development community, they continue to drive incidents, outages, losses, and data breaches, so it is important to include a dynamic application vulnerability scan with every system deployment. This is especially important when a system is deployed to production, but the scan also has significant value as part of the overall Continuous Deployment (CD) process. Several commercial tools, such as Veracode Dynamic Analysis, can scan deployed applications and APIs to detect possible vulnerabilities, report the findings to the compliance and development teams, and provide an audit record of detection and remediation.
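A full dynamic scan exercises many attack types, but the shape of a post-deployment check can be shown with one small slice of it: requesting a deployed endpoint and verifying its security-related response headers. The header list below is an assumption loosely based on commonly cited OWASP recommendations, not a complete policy, and `check_security_headers` and `probe` are hypothetical names. This sketch does not describe how Veracode or any other commercial tool works internally.

```python
from typing import Mapping
from urllib.request import urlopen

# Illustrative baseline only (an assumption, not a complete DAST policy).
# None means "header must be present"; a string means "header must equal this value".
REQUIRED_HEADERS = {
    "strict-transport-security": None,
    "content-security-policy": None,
    "x-content-type-options": "nosniff",
    "x-frame-options": None,
}


def check_security_headers(headers: Mapping[str, str]) -> list[str]:
    """Return the problems found in a response's headers (an empty list means pass)."""
    # HTTP header names are case-insensitive, so compare on lowercase names.
    normalized = {name.lower(): value for name, value in headers.items()}
    problems = []
    for name, expected in REQUIRED_HEADERS.items():
        value = normalized.get(name)
        if value is None:
            problems.append(f"missing header: {name}")
        elif expected is not None and value.strip().lower() != expected:
            problems.append(f"unexpected value for {name}: {value!r}")
    return problems


def probe(url: str, timeout: float = 10.0) -> list[str]:
    """Request a deployed endpoint and check its headers; meant to run after each CD deployment."""
    with urlopen(url, timeout=timeout) as response:
        return check_security_headers(dict(response.headers.items()))
```

In a CD pipeline, `probe` would run against each newly deployed environment, with any returned problems recorded and routed to the compliance and development teams. Note that `urlopen` raises `HTTPError` for 4xx/5xx responses, so a real check would also need to handle that case.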

Data Stewardship

Although data governance is not technically part of the DevSecOps automated security audit architecture, it is critical to a highly regulated organization. It is beyond the scope of the CI/CD automation discussed in this blog post, but it is nevertheless useful to consider automating audits of data encryption, data visibility rules and permissions, data retention policies, and secure data transport as part of the overall development security posture.
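As one example of the data-governance automation mentioned above, the sketch below checks stored records against per-category retention limits. The `Record` type, the category names, and the retention periods are all hypothetical; real limits come from corporate policy and applicable regulation.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Record:
    record_id: str
    category: str  # hypothetical data-category label
    created: date


# Hypothetical retention limits for illustration only.
RETENTION_LIMITS = {
    "session_log": timedelta(days=30),
    "audit_log": timedelta(days=6 * 365),
}


def retention_violations(records, today: date) -> list[str]:
    """List records held past their category's limit, or with no policy at all."""
    violations = []
    for record in records:
        limit = RETENTION_LIMITS.get(record.category)
        if limit is None:
            # An uncategorized record is itself an audit finding.
            violations.append(f"{record.record_id}: no retention policy for {record.category!r}")
        elif today - record.created > limit:
            violations.append(f"{record.record_id}: held longer than {limit.days} days")
    return violations
```

A scheduled job could run this against the data inventory and file each violation as a finding, in the same way the pipeline scans report code vulnerabilities.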

Third-Party Components

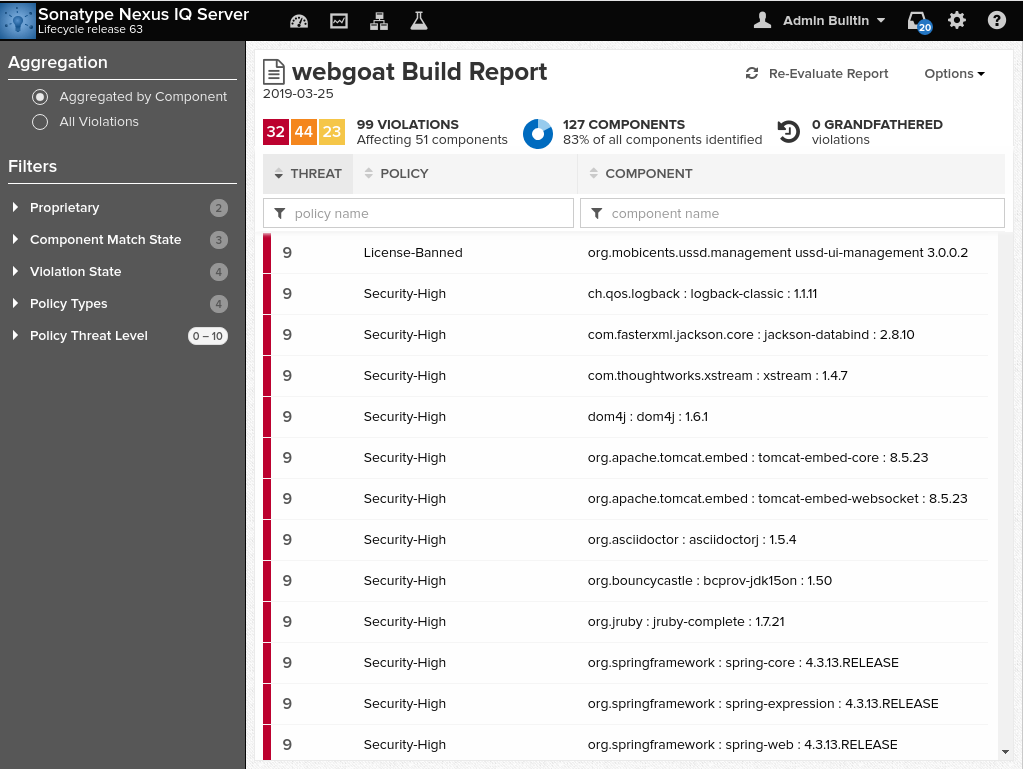

A significant number of the libraries and components used in modern software development are reused across projects. Many development groups rely on third-party components (e.g., open-source or commercially licensed) to gain development efficiency, with the consequence that these components can introduce vulnerabilities. It is important to develop a plan for governing which types of libraries, components, licenses, and dependency versions are permitted for use in the computing environment. An active process must track which components are approved for use in development and which versions of those components are present in existing applications. This means a centrally controlled repository (e.g., JFrog Artifactory, Nexus Repository, Azure DevOps Artifacts) must be established to store and make available all approved components and reusable code. For most open-source and commercial components, the NIST National Vulnerability Database (NVD) provides a continuously updated list of known or suspected vulnerabilities. However, because this database is not updated as rapidly as threats are discovered, several commercial products (e.g., Sonatype Nexus IQ) have been created to provide more timely reporting.

Figure 2. Sonatype Nexus IQ Security Violation Report

Additionally, continuously monitoring the version status of third-party components in software deployed to production, and promptly updating vulnerable components, greatly reduces the overall attack surface of the application and its dependencies. Automating these scans with commercial tools is therefore highly recommended, and every detected vulnerability should be researched immediately to confirm its validity and drive remediation.
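The governance plan described above (approved components, permitted licenses, minimum versions, and known-vulnerable releases) can be expressed as a policy check that runs in CI and again on a schedule against the inventory of production deployments. The following Python sketch uses hypothetical policy data, hypothetical names (`Dependency`, `evaluate`), and assumes purely numeric version strings; in practice the approved list would come from the central repository and the vulnerable-version list from a feed such as the NVD or a commercial product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dependency:
    name: str
    version: str
    license: str


# Hypothetical policy data for illustration only.
ALLOWED_LICENSES = {"MIT", "Apache-2.0", "BSD-3-Clause"}
APPROVED_MIN_VERSION = {"requests": "2.31.0", "jinja2": "3.1.3"}
KNOWN_VULNERABLE = {"jinja2": {"3.1.2"}}


def _version_key(version: str) -> tuple[int, ...]:
    # Simplifying assumption: dotted numeric versions only (no "rc1", "+local", etc.).
    return tuple(int(part) for part in version.split("."))


def evaluate(dependencies) -> list[str]:
    """Return policy violations for resolved dependencies (an empty list means compliant)."""
    violations = []
    for dep in dependencies:
        label = f"{dep.name}=={dep.version}"
        if dep.name not in APPROVED_MIN_VERSION:
            violations.append(f"{label}: not an approved component")
            continue
        if dep.license not in ALLOWED_LICENSES:
            violations.append(f"{label}: license {dep.license} not permitted")
        minimum = APPROVED_MIN_VERSION[dep.name]
        if _version_key(dep.version) < _version_key(minimum):
            violations.append(f"{label}: below approved minimum {minimum}")
        if dep.version in KNOWN_VULNERABLE.get(dep.name, set()):
            violations.append(f"{label}: known vulnerable version")
    return violations
```

Re-running the same check whenever the vulnerability feed changes is what turns a one-time build gate into the continuous monitoring described above.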

Operational Security

As a core part of developing, integrating, and deploying software systems, the application must be configured for deployment into multiple lower and upper computing environments (e.g., DEV/TST/INT/UAT/STG/PRD). This requires that every application be configured with connection and configuration information that often includes keys, certificates, credentials, and other information that must be kept private and secure. In the DevSecOps model, this is accomplished by separating the configuration item name (e.g., “DB_Connection”) from its value (e.g., “SERVER=(DESCRIPTION=(ADDRESS=(PROTOCOL=TCP)(HOST=MyHost)(PORT=MyPort))(CONNECT_DATA=(SERVICE_NAME=MyOracleSID)));uid=myUsername;pwd=myPassword;”). The common approach is to segregate these secrets in a “vault” that is accessed during the Continuous Deployment process. The deployment automation securely retrieves the secrets, associates them with the deployment unit produced by CI automation, and deploys the resulting product to the target environment.

The management and governance of these application secrets should be separate from the development team itself, but readily accessible via the deployment automation.
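The separation of a configuration item's name from its value can be sketched as placeholder substitution at deployment time. In this Python example, `InMemorySecretStore` is a hypothetical stand-in for a real vault, and the hypothetical `render_config` function resolves `${Name}` placeholders for a single target environment using the standard-library `string.Template`. A missing secret raises `KeyError`, so the deployment fails instead of shipping an incomplete configuration.

```python
from string import Template


class InMemorySecretStore:
    """Hypothetical stand-in for a real vault; values keyed by (environment, item name)."""

    def __init__(self, secrets: dict[tuple[str, str], str]):
        self._secrets = dict(secrets)

    def get(self, environment: str, name: str) -> str:
        try:
            return self._secrets[(environment, name)]
        except KeyError:
            # Fail the deployment rather than ship a config with an unresolved secret.
            raise KeyError(f"secret {name!r} is not defined for {environment}") from None


class _EnvironmentView:
    """Mapping-like adapter so string.Template looks names up in one environment."""

    def __init__(self, store: InMemorySecretStore, environment: str):
        self._store = store
        self._environment = environment

    def __getitem__(self, name: str) -> str:
        return self._store.get(self._environment, name)


def render_config(template_text: str, store: InMemorySecretStore, environment: str) -> str:
    """Replace ${Name} placeholders with values resolved for the target environment."""
    return Template(template_text).substitute(_EnvironmentView(store, environment))


if __name__ == "__main__":
    store = InMemorySecretStore({
        ("DEV", "DB_Connection"): "SERVER=dev-db;uid=app;pwd=dev-secret;",
        ("PRD", "DB_Connection"): "SERVER=prd-db;uid=app;pwd=prd-secret;",
    })
    rendered = render_config("DB_Connection=${DB_Connection}\n", store, "DEV")
    # Write the result into the deployment unit; never echo real secrets to pipeline logs.
    print(f"rendered {len(rendered)} characters of configuration")
```

The same template is promoted unchanged from DEV to PRD; only the environment passed to `render_config` differs, which keeps secret values out of source control.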

Secrets Management

Secrets are necessary for the operation of all modern software systems. They may take the form of an SSH key, a digitally signed certificate, or user credentials (e.g., username/password). These secrets must be kept secure and separate from the primary system code. As discussed above, applications are configured with the appropriate credentials, keys, and certificates during the deployment process, when the secret values are substituted for placeholder values in the application configuration file or datastore. A common approach to managing secrets is to use an application that is specifically designed to secure such information while remaining programmatically accessible to the deployment automation as needed. Examples include HashiCorp Vault, Azure Key Vault, and other secret management systems.
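Keeping secrets separate from system code is easier to enforce when a CI step checks for secrets that were committed anyway. The Python sketch below scans text line by line with a few illustrative regular expressions: a private-key header, a quoted password literal, and a `pwd=` field in a connection string. The pattern set and the `find_hardcoded_secrets` function are assumptions for illustration; dedicated secret-scanning tools use far larger, better-tuned rule sets, and a real pipeline step would fail the build on any finding.

```python
import re

# Illustrative patterns only (an assumption); real secret scanners ship many more rules.
SECRET_PATTERNS = {
    "private-key": re.compile(r"-----BEGIN (?:RSA |EC |DSA |OPENSSH )?PRIVATE KEY-----"),
    # e.g. db_password = "hunter2"  (a quoted literal, not an environment lookup)
    "password-literal": re.compile(r"""(?i)(?:password|passwd|pwd)\s*[:=]\s*(["'])[^"']+\1"""),
    # e.g. ...;uid=app;pwd=myPassword;  inside a connection string
    "connection-string-password": re.compile(r"(?i)\bpwd=[^;\"'\s]+"),
}


def find_hardcoded_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line number, rule name) for each line that appears to embed a secret."""
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_no, rule))
    return findings
```

A line such as `key = os.environ["API_KEY"]` is not flagged, because reading a secret from the environment at runtime is the pattern the deployment automation is meant to support.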

Separation of Duties

A critical part of creating secure systems is separating the duties of those responsible for building the software system from those tasked with auditing its security state. In most organizations, this separation is enforced by a centralized security/compliance team that works closely with the development teams. These “Compliance Officers” are responsible for establishing proper secure coding practices, development policies, and policy automation for efficient and frequent audits. In turn, development teams must know and understand all applicable corporate policies and documentation requirements, and must understand where and when in the development process the security Compliance Officer should be consulted.
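Separation of duties can also be enforced mechanically at the release gate. The following sketch, using a hypothetical `ChangeRequest` record and a hypothetical `separation_of_duties_violations` function, rejects a change that its own author approved or that lacks approval from at least one Compliance Officer who did not author it. How authors, approvers, and the officer group are obtained (for example, from the source-control or ticketing system) is left as an assumption.

```python
from dataclasses import dataclass, field


@dataclass
class ChangeRequest:
    change_id: str
    authors: set[str]
    approvals: set[str] = field(default_factory=set)


def separation_of_duties_violations(change: ChangeRequest, compliance_officers: set[str]) -> list[str]:
    """Return reasons the change may not be released (an empty list means the gate passes)."""
    problems = []
    self_approved = change.approvals & change.authors
    if self_approved:
        problems.append(f"{change.change_id}: approved by its own author(s) {sorted(self_approved)}")
    # An approval counts only if it comes from an officer who did not write the change.
    independent = (change.approvals & compliance_officers) - change.authors
    if not independent:
        problems.append(f"{change.change_id}: no independent Compliance Officer approval")
    return problems
```

Note that an officer who authored a change cannot approve it either, which keeps the creator and auditor roles distinct even inside the compliance team.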

Reference

NIST SP 800 Series – IT Security Standards – US Government standards and guidelines for computer security

OWASP Security Center – A nonprofit community that specializes in application security, secure coding practices, and evaluating the maturity of organizational software security practices

CIS Operating System Hardening – A collection of operating-system-specific controls that minimize the attack surface for applications running on those operating systems