A few years ago, I was putting together a conceptual architecture document for a project that called for highly agile methodologies built on microservices and cross-functional teams. A key portion of the architecture involved Hadoop and Spark for both data storage and data processing, delivering data to the microservices platform. It was at this stage that the microservices principles broke down, and I was at a standstill trying to fit the data portion into a microservices architecture. At their core, microservices promote small, loosely coupled services deployed within standalone containers that hold everything required to run the service. This allows each cluster of services to be independently deployed, managed, maintained and owned by the same functional teams that designed and developed those services. The benefits of microservices and their principles are fairly easy to enumerate: isolation, resiliency, scalability, automation, agility and independence.

Hadoop was based on the MapReduce framework, popularized by a programming model described by some smart Google engineers more than a decade ago. The model was for processing large datasets across a distributed cluster. The core Hadoop and MapReduce framework closely ties data processing to locally attached disk, tightly coupling storage to compute resources. The benefits of MapReduce and Hadoop are largely based on taking advantage of local disks attached to compute resources to process data distributed across a large cluster, which is termed data locality. Today, most Hadoop distributions have expanded to include resource management, cluster management, security, high availability, numerous databases, GUI tools and a myriad of other components that enable a variety of services to run on the platform.

A number of evolutions sped the development of microservices and at the same time brought us to a new big data paradigm. This seems like a contradiction in terms, pairing a “micro”-service with “big” data. But the microservices model replaces monolithic inflexibility and clumsiness with smaller service units that have strong modular boundaries, autonomous deployments and technology diversity, making it possible to operate and manage very large, scalable systems in an agile manner. I believe the following developments are breaking up the current monolithic big data model along microservices lines.

Cloud Storage and Compute

When AWS started offering its first cloud products, the first service it launched was its Simple Storage Service (S3), which provided a low-cost, scalable and low-latency storage service designed for 99.99% availability. Over the years it has proven to be a highly durable, cost-effective and simple service for storing and accessing data over familiar and ubiquitous REST and web interfaces, and it remains the most popular and widely used AWS service. As of 2013, it held about 2 trillion objects, having doubled in just one year from 2012 to 2013. If that rate held, or even if growth were only linear rather than exponential, the number of objects would be close to 10 trillion today. S3 leverages newer storage protection mechanisms such as erasure coding, providing much higher durability while using less storage than earlier configurations like RAID 10 or Hadoop JBODs, with much faster recovery if a file fragment is lost. In fact, AWS S3 promises 11 9’s (99.999999999%) of durability, which translates to something like a single lost file every 10,000 years for every 10 million files on S3!
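The durability arithmetic can be checked with a few lines of Python. This is only a back-of-the-envelope sketch: it assumes the durability figure is an annual, per-object survival probability and that losses are independent, and the function names are made up for illustration.

```python
# Back-of-the-envelope check of the "11 nines" durability figure.
# Assumptions (not from AWS documentation): durability is the annual
# probability that one object survives, and losses are independent.

def expected_annual_losses(num_objects: int, nines: int) -> float:
    """Expected number of objects lost per year at the given durability."""
    annual_loss_probability = 10.0 ** (-nines)  # 11 nines -> 1e-11
    return num_objects * annual_loss_probability


def years_per_lost_object(num_objects: int, nines: int) -> float:
    """Average number of years between single-object losses."""
    return 1.0 / expected_annual_losses(num_objects, nines)


if __name__ == "__main__":
    # 10 million objects at 11 nines of durability
    print(round(years_per_lost_object(10_000_000, 11)))
```

With 10 million objects, the expected loss is one ten-thousandth of an object per year, which is where the "one file every 10,000 years" figure comes from.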

Following on the heels of the S3 launch, AWS launched its EC2 service, offering virtualized compute with a wide range of machine capacities, OS types, CPU speeds and attached storage. This allowed users to launch instances dynamically with the compute power they needed, scale up for larger jobs, and terminate instances when no longer required. Options like spot and reserved pricing for EC2 instances also offered cost benefits for dynamically sizing and scaling compute resources. While server virtualization had been around before AWS compute services, what the S3 and EC2 offerings brought together, whether intended or otherwise, was the decoupling of compute and storage services.

Compute services could scale elastically and dynamically, independent of storage systems, while storage systems became more durable, scalable and reliable without needing corresponding compute services. This offered a lot of flexibility and agility, and it restructured the cost of maintaining and preserving data. For example, we could store a vast amount of data on S3 without having to maintain a corresponding set of compute resources to read and write that data. This was vastly different from database and file servers, where storage was tightly coupled to compute.

Even so, this decoupling of compute and storage did not really have any meaningful uses until recently, mainly because, even with the advent of big data, data processing was still closely tied to Hadoop and MapReduce. Remember that the central concept of Hadoop/MapReduce is to process data close to where the compute processes run in order to get faster data I/O. This concept of data locality was central to processing large data sets. So even in the cloud, this meant spinning up compute resources with attached local disks, just as one would in a data center, to take advantage of data locality and faster I/O. The usual pattern was to process data locally within a Hadoop cluster of EC2 instances and then copy the data to S3 as a backup. Even when data was being written directly to and read from S3, a Hadoop cluster was still being used to leverage HDFS for transient data. I still don’t get this approach, since any data locality advantages are usually negated. Why bother with NameNodes, master nodes, ZooKeeper and HDFS data replication for transient data?

Data locality

How critical is data locality? The central hypothesis behind data locality is that disk I/O is significantly faster than network I/O and that disk I/O is a significant part of any data processing job. There are studies that refute that hypothesis and postulate that disk locality is no longer relevant in cluster processing jobs. In fact, in one such study, reading data from local disk is shown to give only an 8% improvement in speed over reading from a remote storage location. This is mainly due to improvements in network speeds outpacing improvements in disk speeds and, more importantly, to memory locality mattering more than disk locality, since in today’s high-performance cluster systems data is read once and then kept in memory. Basing their analysis on Facebook’s Hadoop clusters, the authors found that local disk access is infrequent and used only to read data during initial loads.
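To see why the benefit of locality shrinks when data is read once and network throughput approaches disk throughput, here is a rough model in Python. It is a sketch only: the function names and every number in the example are hypothetical and chosen for illustration, not taken from the study.

```python
# Toy model: a job reads its input once, then computes on it in memory.
# All throughput and timing figures used with this model are hypothetical.

def job_seconds(data_gb: float, read_gb_per_s: float, compute_seconds: float) -> float:
    """Total job time: one pass over the input plus in-memory compute."""
    return data_gb / read_gb_per_s + compute_seconds


def locality_improvement(data_gb: float, local_gb_per_s: float,
                         remote_gb_per_s: float, compute_seconds: float) -> float:
    """Fractional speed improvement from reading locally instead of remotely."""
    local = job_seconds(data_gb, local_gb_per_s, compute_seconds)
    remote = job_seconds(data_gb, remote_gb_per_s, compute_seconds)
    return remote / local - 1.0


if __name__ == "__main__":
    # Hypothetical job: 100 GB input, 400 s of compute,
    # local disk at 1.0 GB/s versus remote storage at 0.8 GB/s.
    print(f"{locality_improvement(100, 1.0, 0.8, 400):.0%}")
```

In this model, the smaller the share of job time spent on the initial read, the less data locality can help, however fast the local disk is.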

Apache Spark

The key point here is memory locality, and one of the factors behind it was the move away from the MapReduce paradigm to more memory-intensive processing frameworks. This was largely due to the introduction of Apache Spark, which works with a distributed memory model for processing data across a cluster. This is much faster than the MapReduce model, which relies on disk to store intermediate results. Spark’s Resilient Distributed Datasets (RDDs) work primarily with data in memory and provide the “resilient” part by keeping track of data processing lineage, so that intermediate results can be recreated from the original data on disk if a node happens to fail. The other significant part of Spark is that it can process streaming data as well as batch data using the same distributed memory model, with all of it abstracted behind a SQL interface. However, the vast majority of Spark jobs are still executed within a Hadoop/YARN cluster. The usual motivation for running Spark on Hadoop was, again, data locality and the lack of a file management system within Spark, even though the majority of data processing was done in memory and Spark gained connectors for other storage systems such as AWS S3 and Azure object stores.
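The lineage idea can be illustrated with a small toy in plain Python. This is not Spark's API: `ToyDataset` and its methods are hypothetical names, and the sketch only shows the principle that a lost in-memory result can be rebuilt by replaying the recorded transformations from the durable source.

```python
from typing import Callable, List, Optional


class ToyDataset:
    """Toy stand-in for an RDD: it remembers how it was derived (its lineage)."""

    def __init__(self, source: Optional[List[int]] = None,
                 parent: Optional["ToyDataset"] = None,
                 transform: Optional[Callable[[int], int]] = None):
        self.source = source        # durable original data ("on disk")
        self.parent = parent        # lineage: the dataset this one came from
        self.transform = transform  # lineage: the step applied to the parent
        self.cache: Optional[List[int]] = None  # in-memory result

    def map(self, fn: Callable[[int], int]) -> "ToyDataset":
        # Record the step instead of computing it eagerly.
        return ToyDataset(parent=self, transform=fn)

    def collect(self) -> List[int]:
        # Use the in-memory result if present; otherwise replay the lineage.
        if self.cache is None:
            if self.parent is None:
                self.cache = list(self.source)
            else:
                self.cache = [self.transform(x) for x in self.parent.collect()]
        return self.cache

    def lose_memory(self) -> None:
        """Simulate a node failure that wipes the in-memory result."""
        self.cache = None


if __name__ == "__main__":
    base = ToyDataset(source=[1, 2, 3])
    result = base.map(lambda x: x * 2).map(lambda x: x + 1)
    print(result.collect())
    result.lose_memory()         # "node failure"
    result.parent.lose_memory()  # the intermediate result is gone too
    print(result.collect())      # rebuilt from the source via lineage
```

Nothing is written to disk between steps; resilience comes from knowing how to recompute a result, not from replicating the intermediate data.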

Data optimization

Other enhancements made data locality less relevant as well. A significant one was data compression, which made loading data across the network faster. Another was columnar data formats, which allowed more efficient data retrieval at the block level rather than retrieval across multiple blocks. Columnar formats like Parquet and ORC made data files faster to query and search and gave rise to distributed query engines such as Presto, which didn’t require Hadoop and was optimized for reading compressed Parquet data from a storage system like S3. Netflix did this quite successfully, running Presto over 10 PB of Parquet data on S3 to query and provide analytics. Given the success of this approach on S3, AWS introduced a serverless Presto service called Athena, which could query data on S3 efficiently and allowed users to truly decouple compute from storage. Other platforms like Snowflake and Redshift Spectrum gave further rise to virtual data warehouses, all decoupled from the underlying storage layer.
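A small Python toy shows why a columnar layout helps when a query touches only one field. It is a sketch of the general idea, not of how Parquet or ORC are actually encoded; the sample records and function names are invented for illustration.

```python
from typing import Dict, List, Tuple

# Hypothetical sample records, made up for this illustration.
SAMPLE_ROWS = [
    {"user": "a", "country": "US", "minutes": 30},
    {"user": "b", "country": "DE", "minutes": 45},
    {"user": "c", "country": "US", "minutes": 60},
]


def to_columnar(rows: List[dict]) -> Dict[str, list]:
    """Pivot row-oriented records into one list per column."""
    columns: Dict[str, list] = {}
    for row in rows:
        for name, value in row.items():
            columns.setdefault(name, []).append(value)
    return columns


def sum_from_rows(rows: List[dict], field: str) -> Tuple[int, int]:
    """Row layout: every value of every row is read to reach one field."""
    values_read = 0
    total = 0
    for row in rows:
        values_read += len(row)
        total += row[field]
    return total, values_read


def sum_from_columns(columns: Dict[str, list], field: str) -> Tuple[int, int]:
    """Columnar layout: only the requested column is read."""
    column = columns[field]
    return sum(column), len(column)


if __name__ == "__main__":
    print(sum_from_rows(SAMPLE_ROWS, "minutes"))
    print(sum_from_columns(to_columnar(SAMPLE_ROWS), "minutes"))
```

Both layouts produce the same total, but the columnar version reads a third of the values here, and the gap widens as tables get wider. Less data to read also means less data to pull over the network from a store like S3.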

DevOps Culture

One of the big benefits of microservices is breaking up large, complex monolithic structures into smaller bite-size chunks and empowering autonomous development teams to build self-contained, modular deployments. This allows very agile deployments and changes within each modular service because it breaks the dependency teams have on large monolithic architectures. Mainly, this avoids the architectural complexities that most organizations have experienced with monolithic platforms. I believe the central driver for microservices has always been DevOps and the ability to simplify, streamline and automate the software development life cycle (SDLC). Much of the twelve-factor application methodology for microservices also rests on strong DevOps principles. The DevOps culture itself has blurred the traditional lines between development and operations teams.

The frustration with complex platforms like Hadoop is that the environment is very difficult to reproduce over time. Because things are so tightly coupled across so many components, it’s hard to make changes without affecting something else. Additionally, operational changes can be made through a myriad of methods: GUIs (Hue, Ambari, Sentry, Ranger), the command line (HDFS, Hive) and text files (core-site, mapred). Over time these changes cause the environment to drift, despite the best intentions of the operations team to keep things streamlined. It’s hard to be dynamic and agile when it’s risky to recreate environments because there is no guarantee that the result will be the same.

Platform as a Service (PaaS)

One of the misconceptions about microservices is that the simplicity of building single-responsibility services engenders operational simplicity as well. The macro goal of microservices is to create an automated, scalable, holistic system by weaving together isolated services. Platform as a Service offerings like Heroku and Cloud Foundry provide the glue to deploy, orchestrate, scale and monitor microservices in cloud and on-premises environments. Things like containers, event buses, service discovery, dumb pipes and circuit breakers are provided by these platforms, freeing development teams to focus on application code, business logic, configuration and the delivery processes for development, testing and system delivery. In fact, it would be hard to find nascent microservices projects running in an IaaS environment rather than a PaaS, because building the orchestration, scalability and some of the internal service patterns yourself is fairly elaborate.

Application-centric Infrastructure

The shift towards microservices, containerization and a DevOps culture is, to a significant degree, a shift towards an application-centric model in operations rather than a machine-centric one. Containers typically hold all the environment requirements that an application needs to run, abstracting the environment away from the application. Google’s container evolution, from its in-house container system called Borg to Kubernetes, abstracts data center operations into an application-centric management model. Under-utilization of machines in data centers, as well as “keeping the lights on” inefficiencies, are reduced significantly with containerization. PaaS platforms like Heroku and Cloud Foundry all leverage containers under the covers to drive application deployment. The fundamental difference between a PaaS platform like Cloud Foundry and Kubernetes is that in Kubernetes the container itself is the application, while in a traditional PaaS the platform abstracts the container away from the application through things like buildpacks. The Kubernetes approach allows greater flexibility for applications that traditionally do not fit the microservices model, such as stateful applications that need to maintain ordering, stickiness or persistent data. For most microservices, statelessness is a key principle because it lets the platform scale dynamically without concern for losing data or state. This excluded databases, and large data processing frameworks like Spark present even more complex challenges in a distributed stateful environment. Kubernetes’ StatefulSets and the Operator pattern allow almost any application to be managed in a container platform through a common set of APIs and objects.
The evolution of this is an open platform model that allows for extensibility, consistency, self-healing and abstraction of operations: less an orchestration of services and more a choreography that allows the environment to grow and evolve.
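To make the "order and stickiness" point concrete, the toy below mimics two default behaviours of a Kubernetes StatefulSet: pods get stable names of the form `<name>-<ordinal>`, and scaling adds ordinals in ascending order and removes them in descending order. It is plain Python, not the Kubernetes API; the function names and the `spark-worker` set name are hypothetical.

```python
from typing import List, Tuple


def stateful_pod_names(set_name: str, replicas: int) -> List[str]:
    """Stable, ordinal-based pod names in the style of a StatefulSet."""
    return [f"{set_name}-{ordinal}" for ordinal in range(replicas)]


def scale(set_name: str, current: int, desired: int) -> Tuple[str, List[str]]:
    """Return the action and the affected pod names, in the order applied."""
    if desired > current:
        # Scale up: create the next ordinals in ascending order.
        created = [f"{set_name}-{i}" for i in range(current, desired)]
        return "create", created
    # Scale down: remove the highest ordinals first.
    removed = [f"{set_name}-{i}" for i in range(current - 1, desired - 1, -1)]
    return "delete", removed


if __name__ == "__main__":
    print(stateful_pod_names("spark-worker", 3))
    print(scale("spark-worker", 2, 4))
    print(scale("spark-worker", 4, 2))
```

Because each identity is predictable, a replacement pod can reattach to the same persistent data as its predecessor, which is what a stateless scaling model cannot promise.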

Cloud Native Architectures

The road, then, leads towards a loosely coupled, microservice-oriented system that abstracts away the underlying infrastructure, allows organizations to deploy anywhere, optimizes resource costs, and lets application developers and operators focus on business logic and code rather than environments, resources, configuration or administration. This is a real requirement, especially in the data science space where, according to Gartner, less than half of ML projects get deployed even in organizations with mature data science practices. Data science pipeline tools like MLflow, Seldon Core and Kubeflow leverage both microservices and the DevOps culture to automate and orchestrate moving ML models into production. Check out this presentation for a truly automated ML pipeline using Kubernetes in a large organization!

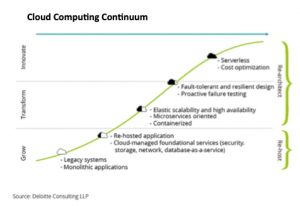

This movement towards a Cloud Native architecture is real and cannot be ignored. It is microservices-based, but it encompasses not just stateless services and front-end applications but also, importantly, data, distributed data and distributed data processing. It is cloud agnostic, hybrid and multi-cloud, to use up all the buzzwords in my dictionary. It is de facto Kubernetes- and container-based and provides resiliency, elasticity, agility and automation through DevOps. The number of Kubernetes implementations for data is growing: Spark, Flink, Kafka, MongoDB, NiFi, Elasticsearch, Cassandra and Presto, often combined with serverless architectures. The architecture diagram below gives a notion of how this would work regardless of cloud platform or environment. The one thing I do know is that if I had to write a conceptual architecture document that integrated data with microservices today, it would be a lot easier.