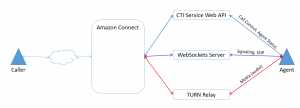

When you initialize the Streams API in your application with a call to initCCP, a lot of setup work happens. Streams creates an iframe and loads the Contact Control Panel (CCP) code from the URL you passed in. Streams establishes a connection (conduit) to the iframe to exchange messages and also subscribes to the internal event bus for agent state notifications. In addition, CCP, even when its UI is hidden, communicates with the Amazon Connect CTI Service, a JSON Web API for controlling Connect calls and agents. For more details on all the layers involved, see the architecture documentation at https://github.com/aws/amazon-connect-streams/blob/master/Architecture.md and the source code on GitHub, starting with the core.js file.

In our prior examples, we hooked up an event handler in Streams for incoming contacts (calls) via the connect.contact(function (contact) {}) method. Amazon Connect triggers this handler when it routes a call to your agent. Within this handler, we can get to the softphone media info through the contact.getAgentConnection().getSoftphoneMediaInfo() method.
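
As a minimal sketch of that handler, the function below subscribes to new contacts and passes any softphone media info to a callback. The function name `watchSoftphoneMediaInfo` and the `connectApi` parameter are my own for illustration (in a page that has loaded Streams you would pass the global `connect` object), and the null check assumes that some contacts carry no softphone media info.

```javascript
// Sketch: subscribe to new contacts and hand their softphone media info to a callback.
// `connectApi` stands in for the global `connect` object from Streams; it is a
// parameter here only so the function can be exercised with a stub.
function watchSoftphoneMediaInfo(connectApi, onInfo) {
  connectApi.contact(function (contact) {
    var agentConnection = contact.getAgentConnection();
    // Assumption: contacts without a softphone leg may return nothing here.
    var info = agentConnection ? agentConnection.getSoftphoneMediaInfo() : null;
    if (info) {
      onInfo(contact.getContactId(), info);
    }
  });
}
```

In the browser, a call such as `watchSoftphoneMediaInfo(connect, function (id, info) { console.log(id, info); })` would log the object discussed in the rest of this post.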

An example softphone media info object for an incoming call looks like this:

{

"callType": "audio_only",

"autoAccept": false,

"mediaLegContextToken": "sCTlZyQA5VeRqKsKz...",

"callContextToken": "sCTlZyQA5VeRqKsKz5s1Z...",

"callConfigJson":

"{ "iceServers":

[{"credential": "w7zETEkww2goK...",

"urls": ["turn:52.55.191.227:3478?transport=udp"],

"username": "150...@amazonaws.com"},

{"credential": "z8I...",

"urls": ["turn:52.55.191.236:3478?transport=udp"],

"username": "150...@amazonaws.com"}

],

"protocol": "LilyRTC/1.0/WSS",

"signalingEndpoint":

"wss://rtc.connect-telecom.us-east-1.amazonaws.com/LilyRTC"

}"

}

From this object we can see that this is an audio-only call, that the agent is configured to accept calls manually, and that it carries some tokens identifying the incoming call plus a list of “ICE servers”. So, what do we do with all of this, and how does it help the agent take the call from Amazon Connect?
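
One detail worth calling out: callConfigJson is itself a JSON document serialized into a string, so it needs a second parse before the ICE servers and signaling endpoint are usable. Here is a small sketch of that step. The function name `parseCallConfig` and the sample values (documentation-range IP, `example.invalid` host, placeholder token and credentials) are made up for illustration and only mirror the shape of the object above.

```javascript
// Sketch: unpack the nested call configuration from a softphone media info object.
function parseCallConfig(softphoneInfo) {
  // callConfigJson is a string containing JSON, so it is parsed separately.
  var callConfig = JSON.parse(softphoneInfo.callConfigJson);
  return {
    callType: softphoneInfo.callType,
    autoAccept: softphoneInfo.autoAccept,
    protocol: callConfig.protocol,
    signalingEndpoint: callConfig.signalingEndpoint,
    iceServers: callConfig.iceServers
  };
}

// Made-up sample with the same shape as the object shown above.
var sampleInfo = {
  callType: "audio_only",
  autoAccept: false,
  callContextToken: "example-token",
  callConfigJson: JSON.stringify({
    iceServers: [
      { urls: ["turn:192.0.2.10:3478?transport=udp"], username: "user", credential: "secret" }
    ],
    protocol: "LilyRTC/1.0/WSS",
    signalingEndpoint: "wss://signaling.example.invalid/LilyRTC"
  })
};

var parsedConfig = parseCallConfig(sampleInfo);
console.log(parsedConfig.signalingEndpoint, parsedConfig.iceServers.length);
```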

Establishing a WebRTC call, signaling and ICE

When I talk about the incoming call from Amazon Connect, I mean a peer-to-peer connection between Amazon Connect and the agent, with the caller’s audio (media) flowing to Amazon Connect and then on to the agent, and vice versa. This peer-to-peer connection uses WebRTC and its RTCPeerConnection class, which Amazon Connect wraps in the RTCSession class.

WebRTC calls can be logically divided into two separate parts: signaling, or metadata about the call, and media, or the content of the call. Establishing a WebRTC call is the process of using signaling to agree on what type of content is being exchanged between peers and the best path for that content.

Agreeing on the type of content

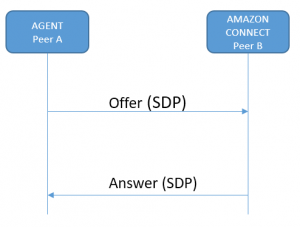

Agreeing on what type of content the peers in a WebRTC call will share is done through the Offer-Answer process. Peer A starts with an Offer, which it sends to Peer B. Peer B takes a look at the Offer and responds with an Answer to Peer A.

As described in the Mozilla developer documentation (https://developer.mozilla.org/en-US/docs/Web/API/WebRTC_API/Connectivity), the content of an Offer or Answer is called a Session Description and describes the media format, type, transfer protocol and originating IP address. These messages are formatted using the Session Description Protocol (SDP).
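
To make the Offer-Answer exchange concrete, here is a rough sketch of Peer A's side using the standard RTCPeerConnection methods (createOffer, setLocalDescription, setRemoteDescription). The `signaling` object, its `send` and `waitForAnswer` methods, and the `{ type, sdp }` message shape are hypothetical stand-ins; they are not the wire format Amazon Connect uses, and this is not how connect-rtc.js is written internally.

```javascript
// Sketch of the offering side of Offer-Answer.
// `pc` is an RTCPeerConnection (or a stub exposing the same three methods).
// `signaling` is a hypothetical transport with send(message) and waitForAnswer().
async function negotiateAsOfferer(pc, signaling) {
  // Peer A describes what it would like to exchange.
  var offer = await pc.createOffer();
  await pc.setLocalDescription(offer);

  // The Session Description (SDP) travels over the signaling channel.
  signaling.send({ type: "offer", sdp: offer.sdp });

  // Peer B replies with its own Session Description.
  var answer = await signaling.waitForAnswer();
  await pc.setRemoteDescription({ type: "answer", sdp: answer.sdp });
  return answer;
}
```

Passing `pc` and `signaling` in as parameters keeps the ordering of the steps visible and lets the function run against stubs outside a browser.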

Who’s offering?

In our case, an agent taking a call from Amazon Connect, I at first assumed Peer A would be Amazon Connect and Peer B would be the agent, because Amazon Connect is “ringing” the agent. That would mean Amazon Connect sends an Offer to the agent, and then the agent responds with an Answer.

This is in fact not the case. For Amazon Connect calls, the agent is Peer A and sends an Offer to Amazon Connect. Amazon Connect is Peer B and responds with an Answer.

This surprised me, but there are good reasons why. First, there are no special powers or privileges that Peer A has over Peer B because they initiate. Someone has to start, but from there on it is a negotiation and conversation between equal peers.

Second, because the agent’s browser does not connect to the signaling channel until they are notified of an incoming call, the timing works out better to have the agent send the Offer. In short, nobody would be happy if Amazon Connect fired off an Offer to the agent’s browser over the signaling channel and the agent’s browser wasn’t even listening for it.

This requires a bit more explanation.

Signaling channel

As mentioned above, when CCP initializes, it establishes a connection to the Amazon Connect CTI API service and that allows us to be notified in code when a call is incoming. In that notification, we get the softphone media info object. And within that object we see a “signalingEndpoint” property set to a value of “wss://rtc.connect-telecom.us-east-1.amazonaws.com/LilyRTC”. This is the WebSocket address of the signaling channel.

With that WebSocket address in hand, CCP, Streams or our own custom code can now create an RTCPeerConnection to Amazon Connect that uses that signaling channel for Offers and Answers. This is done in the SoftphoneManager class, in the new contact handler code, with the RTCSession constructor:

var session = new connect.RTCSession(

callConfig.signalingEndpoint,

callConfig.iceServers,

softphoneInfo.callContextToken,

logger,

contact.getContactId());

Later on in the same method in the SoftphoneManager, we see a call to session.connect(), which sends an Offer over the signaling channel to Amazon Connect.

Before we look at the content of that Offer, back to the timing issue. If Amazon Connect wanted to send the Offer, it would have to essentially spam the signaling channel with repeated Offers because the Amazon Connect servers wouldn’t know if the agent’s browser was connected to the channel or not yet. In addition, delivering the location and protocol of the signaling channel with every incoming call gives Amazon Connect some flexibility to change either of those if needed.

Offer and answer

OK, on to the Offer itself. Remember, the whole point here is to agree on what type of media we are sending around and find a path for it. Here’s a snippet (in SDP) of an Offer from the agent to Amazon Connect:

o=- 270861860651928968 2 IN IP4 127.0.0.1

s=-

t=0 0

a=group:BUNDLE audio

a=msid-semantic: WMS s0FPcRYAyTu9e3lO5lBGTrYoeehY4CK8dOzd

m=audio 9 UDP/TLS/RTP/SAVPF 111 103 104 9 0 8 106 105 13 110 112 113 126

c=IN IP4 0.0.0.0

a=rtcp:9 IN IP4 0.0.0.0

a=ice-ufrag:QqOF

a=ice-pwd:b7bo5qFQ5W4HH4Qp38n54zNf

a=ice-options:trickle

...

Without going into every detail of the Offer-Answer process or SDP, we can identify a few key elements. The “o=” element is the originator info, giving us a session id and originating IP address. In this case it is 127.0.0.1, i.e. localhost. The “m=” element is the media descriptor, in this case audio. If you want more on SDP, there is an excellent interactive post on the WebRTC hacks blog at https://webrtchacks.com/sdp-anatomy/ and a drier but still informative Wikipedia entry at https://en.wikipedia.org/wiki/Session_Description_Protocol.
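
If you would rather pull those two elements out in code than read them by eye, a few lines of string handling are enough. This is only a sketch: `summarizeSdp` is my own helper name, it reads just the “o=” and “m=” lines, and it is nowhere near a complete SDP parser.

```javascript
// Minimal SDP reader: extracts the origin ("o=") and media ("m=") lines.
function summarizeSdp(sdp) {
  var summary = { origin: null, media: [] };
  sdp.split(/\r?\n/).forEach(function (line) {
    if (line.indexOf("o=") === 0) {
      // o=<username> <sess-id> <sess-version> <nettype> <addrtype> <unicast-address>
      var o = line.slice(2).split(" ");
      // The session id stays a string: it can exceed JavaScript's safe integer range.
      summary.origin = { username: o[0], sessionId: o[1], sessionVersion: o[2], address: o[5] };
    } else if (line.indexOf("m=") === 0) {
      // m=<media> <port> <proto> <fmt> ...
      var m = line.slice(2).split(" ");
      summary.media.push({ kind: m[0], port: Number(m[1]), protocol: m[2], payloadTypes: m.slice(3) });
    }
  });
  return summary;
}

// A few lines taken from the Offer snippet above.
var offerSnippet = [
  "o=- 270861860651928968 2 IN IP4 127.0.0.1",
  "s=-",
  "t=0 0",
  "m=audio 9 UDP/TLS/RTP/SAVPF 111 103 104 9 0 8 106 105 13 110 112 113 126",
  "c=IN IP4 0.0.0.0"
].join("\r\n");

console.log(summarizeSdp(offerSnippet));
```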

That Offer goes out over the WebSocket to Amazon Connect, which responds with an Answer like:

o=AmazonConnect 1508415694 1508415695 IN IP4 10.1.3.169

s=AmazonConnect

c=IN IP4 10.1.3.169

t=0 0

a=msid-semantic: WMS ErAOgTGTi77mBzKkZs0bV59azwtyKZPe

m=audio 23248 UDP/TLS/RTP/SAVPF 111 110

a=rtpmap:111 opus/48000/2

a=fmtp:111 useinbandfec=1; minptime=10

a=rtpmap:110 telephone-event/48000

a=silenceSupp:off - - - -

a=ptime:20

a=sendrecv

...

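In the originator info we see this is Amazon Connect, along with the IP address it reports, 10.1.3.169. Note that this is in the private 10.x range rather than a public-facing address, so NAT is presumably involved on the way to it. The media descriptor agrees on audio as the media type, and the “a=” attribute immediately following specifies that both peers should use the OPUS codec for media encoding.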
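
To see which codecs the Answer settled on, you can match the payload type numbers on the “m=” line against the “a=rtpmap” lines. The helper below is a sketch under that assumption; `negotiatedCodecs` is a made-up name, and it only looks at a single audio section.

```javascript
// Sketch: map the payload types on the audio "m=" line to their rtpmap codec names.
function negotiatedCodecs(sdp) {
  var rtpmap = {};
  var payloadTypes = [];
  sdp.split(/\r?\n/).forEach(function (line) {
    var match = /^a=rtpmap:(\d+) (.+)$/.exec(line);
    if (match) {
      rtpmap[match[1]] = match[2];
    } else if (line.indexOf("m=audio ") === 0) {
      // m=audio <port> <proto> <payload types...>
      payloadTypes = line.split(" ").slice(3);
    }
  });
  return payloadTypes.map(function (pt) {
    return { payloadType: Number(pt), codec: rtpmap[pt] || "(no rtpmap line)" };
  });
}

// Lines taken from the Answer shown above.
var answerSnippet = [
  "m=audio 23248 UDP/TLS/RTP/SAVPF 111 110",
  "a=rtpmap:111 opus/48000/2",
  "a=fmtp:111 useinbandfec=1; minptime=10",
  "a=rtpmap:110 telephone-event/48000"
].join("\r\n");

console.log(negotiatedCodecs(answerSnippet));
```

For the Answer above this yields two entries, payload type 111 mapped to opus/48000/2 and payload type 110 mapped to telephone-event/48000 (the latter carries DTMF tones).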

Where to? ICE, STUN, TURN

With the Offer and Answer exchanged, the peers agree they want to exchange OPUS encoded audio. However, we cannot just start exchanging audio bits over the signaling channel. We need a direct (as possible) media path between Amazon Connect and the agent.

Finding the best media path is handled with the Interactive Connectivity Establishment (ICE) technique. If we imagine a world where everyone had their own static IP address, then ICE would be so simple as to be irrelevant. However, we have NAT, firewalls and other obstacles that complicate finding a path.

To find the best path, ICE uses STUN and TURN. STUN servers essentially tell you what your public facing IP is. TURN servers are media relays. If there is no direct path, both peers bounce media through the TURN server. For more on this, plus some nice diagrams see https://temasys.com.sg/webrtc-ice-sorcery/.

In the example we’ve been following, we will use TURN and hence relay media. We have two indications that this is the case. First, in the softphone media info we see that the listed ICE servers are TURN servers, e.g. “turn:52.55.191.227:3478?transport=udp”, and that credentials are provided to connect to them (you don’t want just anybody using your server to relay media).
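
The scheme at the front of each ICE server URL is what tells you whether it is a STUN or a TURN server. As a small illustration, the sketch below splits such a URL into its parts. `parseIceServerUrl` is a hypothetical helper, and the pattern is a simplification of the stun:/turn: URI forms (RFC 7064 and RFC 7065) that does not handle bracketed IPv6 hosts.

```javascript
// Sketch: split an ICE server URL of the form scheme:host[:port][?transport=udp|tcp].
function parseIceServerUrl(url) {
  var match = /^(stun|stuns|turn|turns):([^:?]+)(?::(\d+))?(?:\?transport=(udp|tcp))?$/.exec(url);
  if (!match) {
    return null; // not a STUN/TURN URL this simplified pattern understands
  }
  return {
    scheme: match[1],
    host: match[2],
    port: match[3] ? Number(match[3]) : null,
    transport: match[4] || null,
    // TURN servers relay media; STUN servers only report your public-facing address.
    isRelay: match[1] === "turn" || match[1] === "turns"
  };
}

console.log(parseIceServerUrl("turn:52.55.191.227:3478?transport=udp"));
```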

Second, if we dig deep enough into the RTCSession object we can see that when it creates the underlying RTCPeerConnection it passes in the ICE server addresses as parameters, along with a parameter to specify that only relay (TURN) IP addresses should be considered as media path endpoints:

key: "_createPeerConnection",

value: function _createPeerConnection(configuration) {

return new RTCPeerConnection(configuration);

}

}, {

key: "connect",

value: function connect() {

var self = this;

var now = new Date();

self._sessionReport.sessionStartTime = now;

self._connectTimeStamp = now.getTime();

self._pc = self._createPeerConnection({

iceServers: self._iceServers,

iceTransportPolicy: "relay",

bundlePolicy: "balanced" //maybe 'max-compat', test stereo sound

}, {

optional: [{

googDscp: true

}]

});

ICE Candidates

A high level view of the ICE technique is that it involves each peer sending the other peer a set of ICE candidates, or IP addresses, for the other peer to try out and see if they are reachable for sending media. So Peer A says I’m at IP x or IP y and Peer B says, OK I can get to you from IP y, I’m at IP z and IP a. Peer A says OK, I can get to you at IP z. Now media can flow from IP y to IP z. In other words, the peers have exchanged ICE candidates and chosen the best ones.
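
Each ICE candidate is exchanged as a single line of text, and its “typ” field says whether the address is a local one (host), a public address learned via STUN (srflx) or a TURN relay address (relay). The sketch below parses that line and filters for relay candidates, which is my reading of what a relay-only transport policy lets through. The helper names and the three sample candidates (documentation-range and private addresses) are invented for illustration.

```javascript
// Sketch: parse a "candidate:" line into its main fields.
// candidate:<foundation> <component> <transport> <priority> <address> <port> typ <type> [raddr <addr>] [rport <port>]
function parseIceCandidate(candidateLine) {
  var parts = candidateLine.replace(/^a=/, "").replace(/^candidate:/, "").split(" ");
  if (parts.length < 8 || parts[6] !== "typ") {
    return null;
  }
  var parsed = {
    foundation: parts[0],
    component: Number(parts[1]),
    transport: parts[2].toLowerCase(),
    priority: Number(parts[3]),
    address: parts[4],
    port: Number(parts[5]),
    type: parts[7]
  };
  // Optional attributes follow the type as name/value pairs.
  for (var i = 8; i + 1 < parts.length; i += 2) {
    if (parts[i] === "raddr") { parsed.relatedAddress = parts[i + 1]; }
    if (parts[i] === "rport") { parsed.relatedPort = Number(parts[i + 1]); }
  }
  return parsed;
}

// Made-up candidates of each common type.
var sampleCandidates = [
  "candidate:1 1 udp 2122260223 192.168.1.20 54321 typ host",
  "candidate:2 1 udp 1686052607 203.0.113.7 54321 typ srflx raddr 192.168.1.20 rport 54321",
  "candidate:3 1 udp 41885439 198.51.100.9 50000 typ relay raddr 203.0.113.7 rport 54321"
].map(parseIceCandidate);

// Keep only candidates that point at a TURN relay.
var relayCandidates = sampleCandidates.filter(function (c) { return c.type === "relay"; });
console.log(relayCandidates);
```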

And our call is connected! Here are the parts in visual form:

…but wait, there’s one more mystery to be solved.

When did the agent answer the call?

Nowhere in this post did I mention the agent clicking the Accept button or otherwise taking an action to accept the incoming call. Yet, I just said the call is connected. WebRTC has done its thing and media is flowing. Still not sure? Take a look at the JavaScript console logs in our past sample applications or when running the CCP while a call is ringing to you. You’ll see the Offer and Answer SDP in the logs.

At this point though, the agent cannot hear the caller or speak to them and vice versa. Remember, the agent has just established a WebRTC connection to Amazon Connect. Amazon Connect has its own connection to the caller where it’s playing hold music. In order to support recording and mix the audio properly with multiple participants, Amazon Connect must be using some kind of mixer (perhaps an audio MCU) to control who hears what and when.

So the right people are connected, but until Amazon Connect gets the right signal, it isn’t sending the caller audio to the agent and vice versa. That signal comes from the agent’s browser when they click Accept. On clicking accept, Streams or the CCP sends an accept message to the Amazon Connect CTI API as shown below:

Contact.prototype.accept = function(callbacks) {

var client = connect.core.getClient();

var self = this;

client.call(connect.ClientMethods.ACCEPT_CONTACT, {

contactId: this.getContactId()

}, {

In turn, this message must be telling the mixer to cut off the hold music and let the caller and agent hear each other. Given that going through the Offer-Answer process and ICE can take a little bit of time, by establishing the call before the agent accepts it the agent does not perceive any connection delays.
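
If you are building your own softphone UI on top of Streams, the accept step is a single call on the contact object. Here is a minimal sketch that wraps it in a Promise. The wrapper name `acceptContact` is mine; the `success` and `failure` callbacks are the ones `contact.accept` takes, and what happens on the Amazon Connect side after that is, as above, my inference.

```javascript
// Sketch: wrap contact.accept() in a Promise so a custom Accept button can await it.
function acceptContact(contact) {
  return new Promise(function (resolve, reject) {
    contact.accept({
      success: function () { resolve(contact.getContactId()); },
      failure: function (err) { reject(err); }
    });
  });
}
```

A click handler could then call `acceptContact(contact).then(...)` and update the UI once the accept message has been acknowledged.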

Call auto-establishment explains how supervisors doing silent joins via the CCP are automatically connected without clicking accept call. For silent joins, the mixer just sends them the call audio as soon as the call is established.

And that ends our tour of Amazon Connect, softphone media info and WebRTC. I hope you understand why I didn’t go down this rabbit hole in the last post. Thanks for reading. Any questions, comments or corrections are greatly appreciated.

Post-script: Logging ICE candidates

Curious to see what ICE candidates are being exchanged and ultimately selected? If you are using connect-rtc.js you get a few tantalizing log messages in the JavaScript console like “SESSION onicecandidate [object RTCIceCandidate]”. So close, but the default console logger isn’t expanding that RTCIceCandidate object.

While working on this post, I tweaked the connect-rtc.js, specifically the RTCSession object to log the string representations of the ICE candidates. First in “onIceCandidate” and then in “onEnter”:

key: "onIceCandidate",

value: function onIceCandidate(evt) {

var candidate = evt.candidate;

//MY HACK

this.logger.log("onicecandidate", candidate ? JSON.stringify(candidate) : "null");

key: "onEnter",

setRemoteDescriptionPromise.then(function () {

var remoteCandidatePromises = Promise.all(self._candidates.map(function (candidate) {

var remoteCandidate = self._createRemoteCandidate(candidate);

//MY HACK

self.logger.info("Adding remote candidate", remoteCandidate ? JSON.stringify(remoteCandidate) : "null");

return rtcSession._pc.addIceCandidate(remoteCandidate);

}));

Enjoy! To learn more about what we can do with Amazon Connect, check out “Helping You Get the Most Out of Amazon Connect”.

Thank you for this great write up. One question, you stated “In the originator info we see this is Amazon Connect and its coming from a public facing IP”, but when I look at the SDP data I see IP 10.1.3.169. I interpret that to be private address space. Am I missing something here?

Yes, you’re right. 10.x addresses are reserved for private networks. This must be something to do with NAT. The most important thing is that the IP is addressable for media to get there. Good observation!