Apache Kafka has grown steadily in popularity over the years as a real-time messaging and communication system. It was created at LinkedIn and contributed to Apache in 2011, and it has since become one of the top Apache projects. Today, thousands of enterprises use it as a core part of their systems. In this blog, I will introduce how to produce and consume Kafka messages.

1.Prerequisite

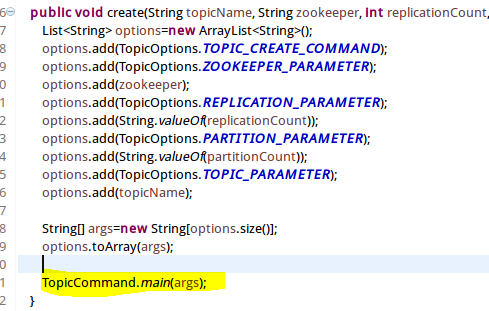

First, you need to install a single-node Kafka system or a Kafka cluster. Then you need to know a little about Kafka topics: a topic is a category or feed name to which records are published. Topics in Kafka are always multi-subscriber; that is, a topic can have zero, one, or many consumers that subscribe to the data written to it [1]. The topic name is what an application uses to find the messages it wants to consume. You can create one as follows:

b.Using program:
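As a minimal sketch of creating a topic from a program, the following assumes the official Apache Kafka Java client (`kafka-clients`) is on the classpath and a broker is reachable. The broker address `localhost:9092`, the topic name `demo-topic`, and the class and helper names are hypothetical values chosen for illustration.

```java
import java.util.Collections;
import java.util.Properties;
import java.util.concurrent.ExecutionException;

import org.apache.kafka.clients.admin.AdminClient;
import org.apache.kafka.clients.admin.NewTopic;

class CreateTopicSketch {
    // Hypothetical values for illustration; replace with your own.
    static final String BOOTSTRAP_SERVERS = "localhost:9092";
    static final String TOPIC = "demo-topic";

    // Describes a topic with one partition and a replication factor of 1,
    // which is the most a single-node installation can satisfy.
    static NewTopic describeTopic(String name) {
        return new NewTopic(name, 1, (short) 1);
    }

    public static void main(String[] args) throws ExecutionException, InterruptedException {
        Properties props = new Properties();
        props.put("bootstrap.servers", BOOTSTRAP_SERVERS);

        // AdminClient is AutoCloseable, so try-with-resources releases its connections.
        try (AdminClient admin = AdminClient.create(props)) {
            // createTopics is asynchronous; all().get() blocks until the request completes.
            admin.createTopics(Collections.singletonList(describeTopic(TOPIC))).all().get();
            System.out.println("Created topic " + TOPIC);
        }
    }
}
```

On a multi-broker cluster you would normally raise the partition count and replication factor; the values here are only meant to work on a single node.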


2.Kafka message producer

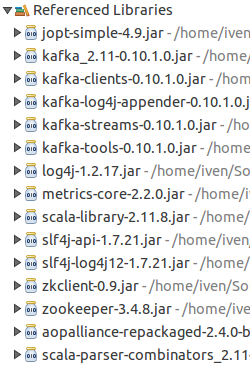

Now we can write our first program to publish messages. Before we start programming, we need to add references to the necessary Kafka packages:

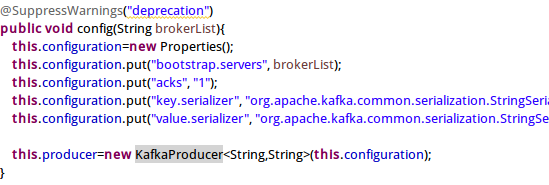

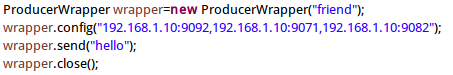

It is time to create our first Kafka message producer. You need to instantiate a KafkaProducer<TKey,TValue> and set the necessary configuration, such as the Kafka cluster broker list, the message serializer class, and so on.
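To make the configuration concrete, here is a minimal sketch of the settings a producer typically needs, written in Java with only the standard library. The property keys are the standard Kafka producer settings; the broker address, the choice of string serializers for both key and value, and the class and method names are assumptions for illustration.

```java
import java.util.Properties;

class ProducerConfigSketch {
    // Builds the producer configuration. The broker list is passed in;
    // everything else is an illustrative default.
    static Properties producerProperties(String bootstrapServers) {
        Properties props = new Properties();
        // Comma-separated list of brokers used for the initial connection.
        props.put("bootstrap.servers", bootstrapServers);
        // Serializer classes that turn the key and value into bytes.
        props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        // Wait for all in-sync replicas to acknowledge each record.
        props.put("acks", "all");
        return props;
    }

    public static void main(String[] args) {
        // "localhost:9092" is a hypothetical single-node broker address.
        Properties props = producerProperties("localhost:9092");
        props.forEach((key, value) -> System.out.println(key + "=" + value));
    }
}
```

With the Kafka Java client on the classpath, a `Properties` object like this can be passed to the `KafkaProducer` constructor.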

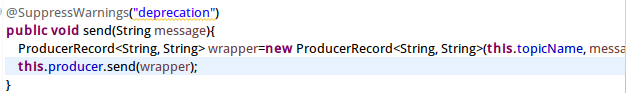

After instantiation, you can send the message as below:
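The send step can be sketched as follows, assuming the official Apache Kafka Java client (`kafka-clients`) and a reachable broker. The broker address, the topic `demo-topic`, the sample key and value, and the class and helper names are hypothetical.

```java
import java.util.Properties;

import org.apache.kafka.clients.producer.KafkaProducer;
import org.apache.kafka.clients.producer.Producer;
import org.apache.kafka.clients.producer.ProducerRecord;

class SendMessageSketch {
    // Hypothetical topic name for illustration.
    static final String TOPIC = "demo-topic";

    // Wraps a key and value in a record addressed to the topic.
    static ProducerRecord<String, String> buildRecord(String key, String value) {
        return new ProducerRecord<>(TOPIC, key, value);
    }

    public static void main(String[] args) {
        Properties props = new Properties();
        props.put("bootstrap.servers", "localhost:9092"); // assumed broker address
        props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");

        // Closing the producer releases its network resources.
        try (Producer<String, String> producer = new KafkaProducer<>(props)) {
            // send() is asynchronous; the callback runs once the broker responds.
            producer.send(buildRecord("key-1", "hello kafka"), (metadata, exception) -> {
                if (exception != null) {
                    System.err.println("Send failed: " + exception.getMessage());
                } else {
                    System.out.println("Sent to partition " + metadata.partition()
                            + " at offset " + metadata.offset());
                }
            });
            // Push any buffered records out before the producer is closed.
            producer.flush();
        }
    }
}
```

Records with the same key go to the same partition, so the key is worth choosing deliberately when ordering matters.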

3.Kafka message consumer

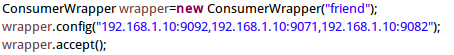

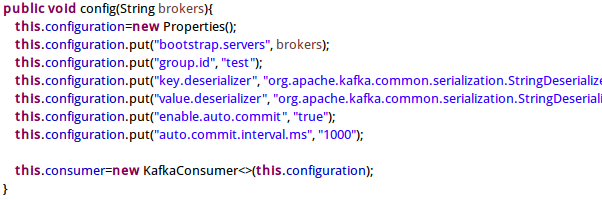

As with the producer, we need to add references to the necessary Kafka packages. Then we can create the Kafka message consumer. You need to instantiate a KafkaConsumer<TKey,TValue> and set the necessary configuration, such as the Kafka cluster broker list, the message deserializer class, and so on.
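The consumer configuration mirrors the producer's. Below is a minimal sketch in Java using only the standard library. The property keys are the standard Kafka consumer settings; the broker address, the group name `demo-group`, the string deserializers, and the class and method names are assumptions for illustration.

```java
import java.util.Properties;

class ConsumerConfigSketch {
    // Builds the consumer configuration for the given brokers and consumer group.
    static Properties consumerProperties(String bootstrapServers, String groupId) {
        Properties props = new Properties();
        // Comma-separated list of brokers used for the initial connection.
        props.put("bootstrap.servers", bootstrapServers);
        // Consumers that share a group id split the topic's partitions among themselves.
        props.put("group.id", groupId);
        // Deserializer classes that turn the stored bytes back into keys and values.
        props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        // Start from the oldest available record when the group has no committed offset.
        props.put("auto.offset.reset", "earliest");
        return props;
    }

    public static void main(String[] args) {
        // Hypothetical broker address and group name.
        Properties props = consumerProperties("localhost:9092", "demo-group");
        props.forEach((key, value) -> System.out.println(key + "=" + value));
    }
}
```

The deserializers must match the serializers the producer used, otherwise the consumer cannot decode the records.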

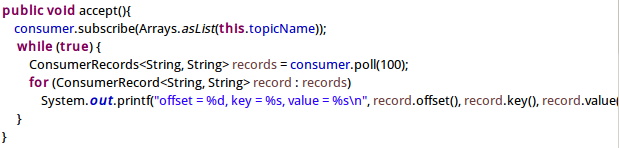

After instantiation, you can receive the message as below:
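The receive step can be sketched as a subscribe-and-poll loop, assuming the official Apache Kafka Java client (`kafka-clients`, version 2.0 or later for `poll(Duration)`) and a reachable broker. The broker address, topic, group name, the limit of ten polls, and the class and helper names are hypothetical.

```java
import java.time.Duration;
import java.util.Collections;
import java.util.Properties;

import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.clients.consumer.ConsumerRecords;
import org.apache.kafka.clients.consumer.KafkaConsumer;

class ReceiveMessageSketch {
    // Hypothetical topic name for illustration.
    static final String TOPIC = "demo-topic";

    // Formats a record as topic[partition]@offset: key -> value.
    static String describe(ConsumerRecord<String, String> record) {
        return record.topic() + "[" + record.partition() + "]@" + record.offset()
                + ": " + record.key() + " -> " + record.value();
    }

    public static void main(String[] args) {
        Properties props = new Properties();
        props.put("bootstrap.servers", "localhost:9092"); // assumed broker address
        props.put("group.id", "demo-group");              // assumed consumer group
        props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        props.put("auto.offset.reset", "earliest");

        try (KafkaConsumer<String, String> consumer = new KafkaConsumer<>(props)) {
            consumer.subscribe(Collections.singletonList(TOPIC));
            // Poll a fixed number of times so the sketch terminates;
            // a long-running service would loop until it is shut down.
            for (int i = 0; i < 10; i++) {
                ConsumerRecords<String, String> records = consumer.poll(Duration.ofMillis(500));
                for (ConsumerRecord<String, String> record : records) {
                    System.out.println(describe(record));
                }
            }
        }
    }
}
```

Each call to `poll` returns whatever records arrived since the previous call, which may be none, so an empty batch is not an error.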

[1] Apache Kafka, official site