As a data architect, iterative development scared the stuffing out of me at first. I was accustomed to having the data model pretty well set up front and working hard to minimize or at least localize changes to the overall database structure. Iterative development make change normal, so no more “holding the line!”

Luckily, database refactoring isn’t a new concept. And metadata tools are improving constantly making impact analysis less and less daunting all the time. When I plan for DB change, I’m primarily looking for ways to minimize the impact to both upstream (ETL) and downstream (reporting, extracts) database users. Impact generally comes from:

- Structural change – adding or removing columns, tables, schemas, etc.

- Change in grain – what was once a “one row per customer” table is now a “one row per customer location” table.

- Change in content – “all” the customers in the customer table used to be just US customers, but now there’s international customers in there!

Changes in structure of the database can be disrupting, but some disruption is avoidable. Here’s some techniques to minimize structural change disruption:

- Stabilize the user interface through the use of user views from day 1. In my DW designs, nobody gets direct access to the tables. A “SELECT * FROM” view is near-zero overhead and gives you the freedom to change the underlying table at will without impacting the interface.

- Coach users to never SELECT * FROM in their queries. Specifying columns means new columns don’t impact you at all. Assume the interface will grow in columns.

- Define a default value for new columns that is explicit. An old “legacy” record shouldn’t have a meaningful value in a new column unless that value is truly valid.

- Determine if a removed column should continue to live in the user interface with a static default value. Generally, I like things to break if there’s no longer a value available to ensure reports don’t silently produce bad output. However, sometimes it’s OK to default everyone’s t-shirt size to XL and avoid breaking the reports. Alternately, leave the old data alone and default new records to an explicit “no longer collected” value.

- Renaming tables and columns should be handled by manipulating the user interface, retaining but deprecating the old name(s) and adding the new to the interface.

The Future of Big Data

With some guidance, you can craft a data platform that is right for your organization’s needs and gets the most return from your data capital.

Change in grain is an ugly one, and I avoid it if at all possible. My general rule of thumb is that a table’s grain is constant over its life. New grain = new table. Otherwise, you will break things and these breaks will generally be silent. The CFO’s reports will just start double counting things, and that’s a bad plan for everone.

Change in content is unavoidable but can be mitigated with some up front planning. Because of the possibility of change in content, I (often) add a definition to tables coaching users on what filters to include for the table by default. In other words, define specifically what you mean by “ALL” when you query for “all customers” or “all products”. Almost all reports should be limited by default to some part of the organization, to a geographic region, to a particular set of charge codes, etc. In cube or reporting environments, I generally avoid changing the default member(s) of a dimension. If the user wants a different member, they should specify it, not break everyone else’s reports!

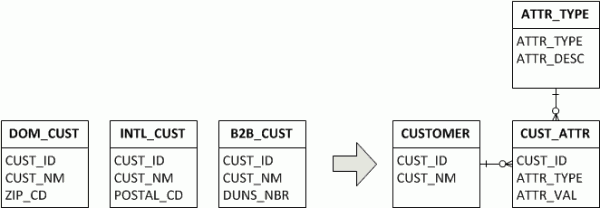

The other major tool in the modeler’s tool belt regarding design change is abstraction. Abstract models are significantly more resilient to change than highly specific models. This is the result of effectively moving some of the structure of the model into the data itself. For instance, instead of having 3 customer tables, one for domestic, one for foreign, and one for B2B, an abstract model might have a single customer table paired with a Customer Attribute (CUST_ATTR) table configured using a Customer Attribute Type (ATTR_TYPE) table. Now, to add a column to a customer is simply to add a row to the Customer Attribute Type table.

This sounds great, but know that you’ve now introduce a “structural data” table to the model. The contents of the ATTR_TYPE table now effectively dictate the structure of the model, and these contents need to be controlled (similar to DDL).

So, refactoring isn’t as bad as all that. You’re going to break things to be sure, but if you take control of your database interfaces, you control where those breaks happen and have a chance to minimize the impacts to your own processes as well as your customers’.